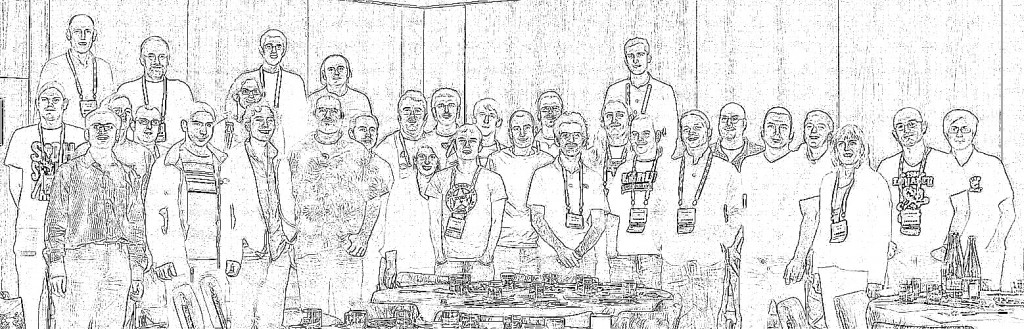

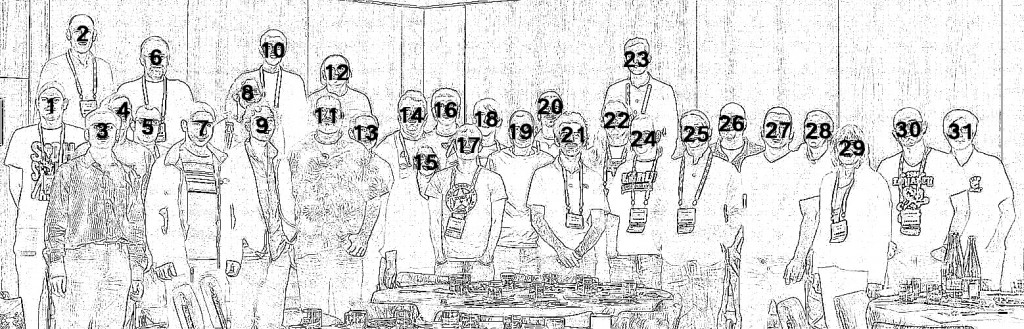

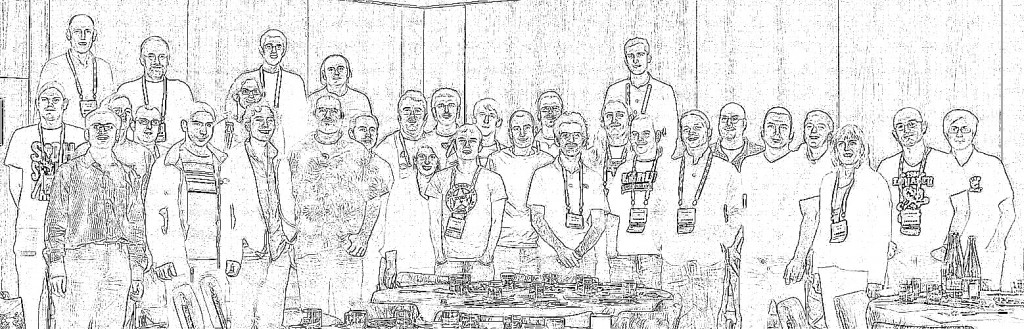

Two weeks back, I was in Barcelona for LinuxCon Europe / KVM Forum 2012. While there Jeff Cody acquired a photo of many of the KVM community developers. Although already visible on Google+, along with tags to identify all the faces, I wanted to put up an outline view of the photo too, mostly so that I could then write this blog post describing how to create the head outline :-) The steps on this page were all performed using Fedora 17 and GIMP 2.8.2, but this should work with pretty much every version of GIMP out there since there’s nothing fancy going on.

The master photo

The master photo that we’ll be working with is

Step 1: Edge detect

I figured that one of the edge detection algorithms available in GIMP would be a good basis for producing a head outline. After a little trial & error, I picked ‘Filters -> Edge-detect -> Edge…’, then chose the ‘Laplace’ algorithm.

This resulted in the following image

Step 2: Invert colours

The previous image shows the outlines quite effectively, but my desire is for a primarily white image, with black outlines. This is easily achieved using the menu option ‘Colours -> Invert’

Step 3: Desaturate

The edge detection algorithm leaves some colour artifacts in the images, which are trivially dealt with by desaturating the image using ‘Colours -> Desaturate…’ and any one of the desaturation algorithms GIMP offers.

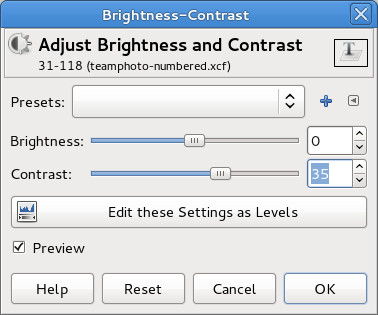

Step 4: Boost contrast

The outline looks pretty good, but there is still a fair amount of fine detail “noise”. There are a few ways we might get rid of this – in particular some of GIMP’s noise removal filters. I went for the easy option of simply boosting the overall image contrast, using ‘Colours -> Brightness/Contrast…’

For this image, setting the contrast to ‘40’ worked well; vary this according to the particular characteristics of your image.
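To make the order of operations in steps 1 to 4 concrete, here is a toy sketch in plain Python. It is not GIMP's implementation: the image is assumed to be three small 2D lists (red, green, blue planes), the Laplace filter is a basic 4-neighbour kernel, and `factor` is a made-up stand-in for the contrast slider rather than an equivalent of the ‘40’ used above.

```python
# Toy version of steps 1-4; mirrors the order of operations only, not GIMP's algorithms.

def laplace(plane):
    """Step 1: 4-neighbour Laplacian edge detect; border pixels are left at 0."""
    h, w = len(plane), len(plane[0])
    out = [[0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            v = (plane[y - 1][x] + plane[y + 1][x] +
                 plane[y][x - 1] + plane[y][x + 1] - 4 * plane[y][x])
            out[y][x] = min(255, abs(v))
    return out

def invert(plane):
    """Step 2: white background, dark outlines."""
    return [[255 - p for p in row] for row in plane]

def desaturate(planes):
    """Step 3: average the three colour planes into one grey plane."""
    r, g, b = planes
    return [[(r[y][x] + g[y][x] + b[y][x]) // 3 for x in range(len(r[0]))]
            for y in range(len(r))]

def boost_contrast(plane, factor):
    """Step 4: push values away from mid-grey (128), clamped to 0..255."""
    return [[max(0, min(255, int(128 + (p - 128) * factor))) for p in row]
            for row in plane]

def outline(planes, factor=1.5):
    edges = [laplace(p) for p in planes]
    inverted = [invert(p) for p in edges]
    return boost_contrast(desaturate(inverted), factor)
```

Raising `factor` drives faint grey noise towards white while strong edges stay dark, which is the effect the contrast boost is being used for here.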

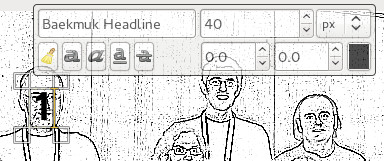

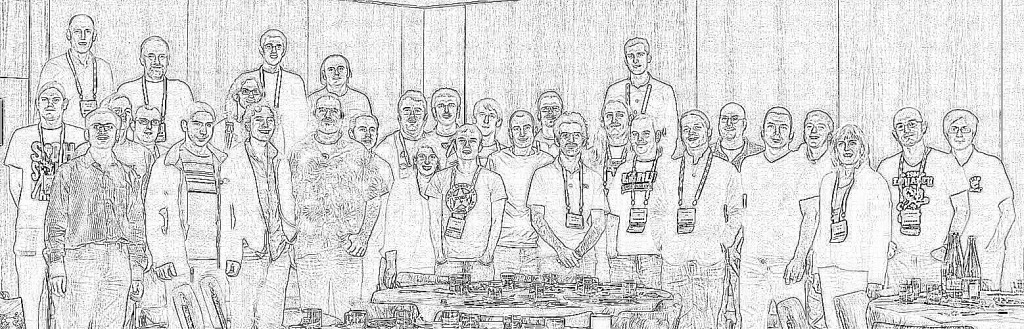

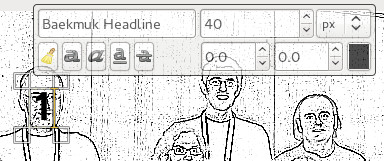

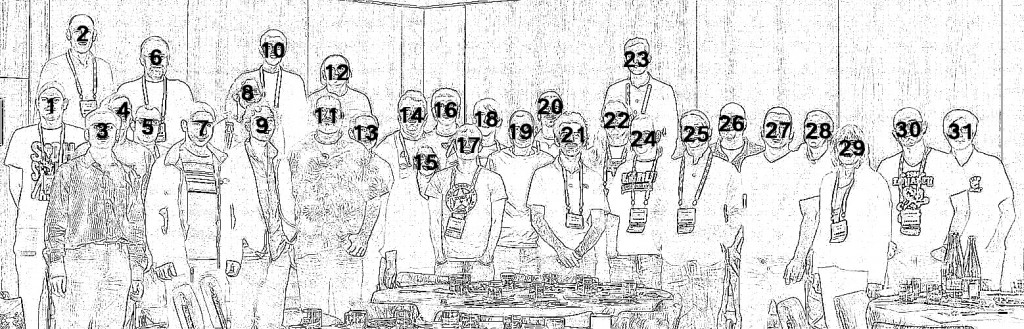

Step 5: Add numbers

The outline view is where we want to be, but the whole point of the exercise is to make it easy to put names to faces. Thus the final step is to simply number each head. GIMP’s text tool is the perfect way to do this: just click on each face in turn and type in a number.

No need to worry about perfect placement, since each piece of text becomes a new layer. Once done, the layer positions can be moved around to fit well.

And that’s the final image completed. In the page I created on the KVM website, a little javascript handled swapping between the original & outline views on mouse over, but that’s all there is to it. The hardest part of the whole exercise is actually remembering who everyone is :-P

As I mentioned in my previous post, I’m not really a fan of giant shell scripts which ask for unrestricted sudo access without telling you what they’re going to do. Unfortunately DevStack is one such script :-( So I decided to investigate just what it does to a Fedora 17 host when it is run. The general idea I had was

- Install a generic Fedora 17 guest

- Create a QCow2 image using the installed image as its backing file

- Reconfigure the guest to use the QCow2 image as its disk

- Run DevStack in the guest

- Compare the contents of the original installed image and the DevStack processed image

It sounded like libguestfs ought to be able to help out with the last step, and after a few words with Rich, I learnt about the use of virt-ls for exactly this purpose. After trying this once, it quickly became apparent that just comparing the lists of files is quite difficult, because DevStack installs a load of extra RPMs containing many thousands of files. So to take this out of the equation, I grabbed the /var/log/yum.log file to get a list of all RPMs that DevStack had installed, and manually added them into the generic Fedora 17 guest base image. Now I could re-run DevStack and do a file comparison which excluded all the stuff installed by RPM.
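The two data-munging steps described above can be sketched roughly as follows. This is only an illustration, not the commands actually used: it assumes yum.log lines of the form `<date> Installed: [epoch:]name-version-release.arch`, and it assumes each file listing has already been turned into a mapping of path to checksum by whatever tool produced it. The function names are invented for this example.

```python
import re

# Assumed yum.log line shape: "Nov 19 14:00:01 Installed: 1:mysql-5.5.28-1.fc17.x86_64"
INSTALLED = re.compile(r"Installed: (?:\d+:)?(\S+)$")

def installed_packages(yum_log_text):
    """Return the packages named on 'Installed:' lines, with any epoch stripped."""
    pkgs = []
    for line in yum_log_text.splitlines():
        match = INSTALLED.search(line.strip())
        if match:
            pkgs.append(match.group(1))
    return pkgs

def compare_listings(before, after):
    """Compare two {path: checksum} mappings.

    Returns three sorted lists: created, removed and changed paths.
    """
    created = sorted(set(after) - set(before))
    removed = sorted(set(before) - set(after))
    changed = sorted(p for p in before if p in after and before[p] != after[p])
    return created, removed, changed
```

Feeding the package list back into the base image first is what keeps the `created` list down to the files DevStack wrote itself, rather than everything RPM unpacked.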

RPM packages installed (with YUM)

- apr-1.4.6-1.fc17.x86_64

- apr-util-1.4.1-2.fc17.x86_64

- apr-util-ldap-1.4.1-2.fc17.x86_64

- augeas-libs-0.10.0-3.fc17.x86_64

- binutils-2.22.52.0.1-10.fc17.x86_64

- boost-1.48.0-13.fc17.x86_64

- boost-chrono-1.48.0-13.fc17.x86_64

- boost-date-time-1.48.0-13.fc17.x86_64

- boost-filesystem-1.48.0-13.fc17.x86_64

- boost-graph-1.48.0-13.fc17.x86_64

- boost-iostreams-1.48.0-13.fc17.x86_64

- boost-locale-1.48.0-13.fc17.x86_64

- boost-program-options-1.48.0-13.fc17.x86_64

- boost-python-1.48.0-13.fc17.x86_64

- boost-random-1.48.0-13.fc17.x86_64

- boost-regex-1.48.0-13.fc17.x86_64

- boost-serialization-1.48.0-13.fc17.x86_64

- boost-signals-1.48.0-13.fc17.x86_64

- boost-system-1.48.0-13.fc17.x86_64

- boost-test-1.48.0-13.fc17.x86_64

- boost-thread-1.48.0-13.fc17.x86_64

- boost-timer-1.48.0-13.fc17.x86_64

- boost-wave-1.48.0-13.fc17.x86_64

- ceph-0.44-5.fc17.x86_64

- check-0.9.8-5.fc17.x86_64

- cloog-ppl-0.15.11-3.fc17.1.x86_64

- cpp-4.7.2-2.fc17.x86_64

- curl-7.24.0-5.fc17.x86_64

- Django-1.4.2-1.fc17.noarch

- django-registration-0.7-3.fc17.noarch

- dmidecode-2.11-8.fc17.x86_64

- dnsmasq-utils-2.63-1.fc17.x86_64

- ebtables-2.0.10-5.fc17.x86_64

- euca2ools-2.1.1-2.fc17.noarch

- gawk-4.0.1-1.fc17.x86_64

- gcc-4.7.2-2.fc17.x86_64

- genisoimage-1.1.11-14.fc17.x86_64

- git-1.7.11.7-1.fc17.x86_64

- glusterfs-3.2.7-2.fc17.x86_64

- glusterfs-fuse-3.2.7-2.fc17.x86_64

- gnutls-utils-2.12.17-1.fc17.x86_64

- gperftools-libs-2.0-5.fc17.x86_64

- httpd-2.2.22-4.fc17.x86_64

- httpd-tools-2.2.22-4.fc17.x86_64

- iptables-1.4.14-2.fc17.x86_64

- ipxe-roms-qemu-20120328-1.gitaac9718.fc17.noarch

- iscsi-initiator-utils-6.2.0.872-18.fc17.x86_64

- kernel-headers-3.6.6-1.fc17.x86_64

- kpartx-0.4.9-26.fc17.x86_64

- libaio-0.3.109-5.fc17.x86_64

- libcurl-7.24.0-5.fc17.x86_64

- libmpc-0.9-2.fc17.2.x86_64

- libunwind-1.0.1-3.fc17.x86_64

- libusal-1.1.11-14.fc17.x86_64

- libvirt-0.9.11.7-1.fc17.x86_64

- libvirt-client-0.9.11.7-1.fc17.x86_64

- libvirt-daemon-0.9.11.7-1.fc17.x86_64

- libvirt-daemon-config-network-0.9.11.7-1.fc17.x86_64

- libvirt-daemon-config-nwfilter-0.9.11.7-1.fc17.x86_64

- libvirt-python-0.9.11.7-1.fc17.x86_64

- libwsman1-2.2.7-5.fc17.x86_64

- lzop-1.03-4.fc17.x86_64

- m2crypto-0.21.1-8.fc17.x86_64

- mod_wsgi-3.3-2.fc17.x86_64

- mx-3.2.3-1.fc17.x86_64

- mysql-5.5.28-1.fc17.x86_64

- mysql-libs-5.5.28-1.fc17.x86_64

- MySQL-python-1.2.3-5.fc17.x86_64

- mysql-server-5.5.28-1.fc17.x86_64

- netcf-libs-0.2.2-1.fc17.x86_64

- numad-0.5-4.20120522git.fc17.x86_64

- numpy-1.6.2-1.fc17.x86_64

- parted-3.0-10.fc17.x86_64

- perl-AnyEvent-5.27-7.fc17.noarch

- perl-AnyEvent-AIO-1.1-8.fc17.noarch

- perl-AnyEvent-BDB-1.1-7.fc17.noarch

- perl-Async-MergePoint-0.03-7.fc17.noarch

- perl-BDB-1.88-5.fc17.x86_64

- perl-common-sense-3.5-1.fc17.noarch

- perl-Compress-Raw-Bzip2-2.052-1.fc17.x86_64

- perl-Compress-Raw-Zlib-2.052-1.fc17.x86_64

- perl-Config-General-2.50-6.fc17.noarch

- perl-Coro-6.07-3.fc17.x86_64

- perl-Curses-1.28-5.fc17.x86_64

- perl-DBD-MySQL-4.020-2.fc17.x86_64

- perl-DBI-1.617-1.fc17.x86_64

- perl-Encode-Locale-1.02-5.fc17.noarch

- perl-Error-0.17016-7.fc17.noarch

- perl-EV-4.03-8.fc17.x86_64

- perl-Event-1.20-1.fc17.x86_64

- perl-Event-Lib-1.03-16.fc17.x86_64

- perl-Git-1.7.11.7-1.fc17.noarch

- perl-Glib-1.241-2.fc17.x86_64

- perl-Guard-1.022-1.fc17.x86_64

- perl-Heap-0.80-10.fc17.noarch

- perl-HTML-Parser-3.69-3.fc17.x86_64

- perl-HTML-Tagset-3.20-10.fc17.noarch

- perl-HTTP-Date-6.00-3.fc17.noarch

- perl-HTTP-Message-6.03-1.fc17.noarch

- perl-IO-AIO-4.15-1.fc17.x86_64

- perl-IO-Async-0.29-7.fc17.noarch

- perl-IO-Compress-2.052-1.fc17.noarch

- perl-IO-Socket-SSL-1.66-1.fc17.noarch

- perl-IO-Tty-1.10-5.fc17.x86_64

- perl-LWP-MediaTypes-6.01-4.fc17.noarch

- perl-Net-HTTP-6.02-2.fc17.noarch

- perl-Net-LibIDN-0.12-8.fc17.x86_64

- perl-Net-SSLeay-1.48-1.fc17.x86_64

- perl-POE-1.350-2.fc17.noarch

- perl-Socket6-0.23-8.fc17.x86_64

- perl-Socket-GetAddrInfo-0.19-1.fc17.x86_64

- perl-TermReadKey-2.30-14.fc17.x86_64

- perl-TimeDate-1.20-6.fc17.noarch

- perl-URI-1.60-1.fc17.noarch

- ppl-0.11.2-8.fc17.x86_64

- ppl-pwl-0.11.2-8.fc17.x86_64

- pylint-0.25.1-1.fc17.noarch

- python-amqplib-1.0.2-3.fc17.noarch

- python-anyjson-0.3.1-3.fc17.noarch

- python-babel-0.9.6-3.fc17.noarch

- python-BeautifulSoup-3.2.1-3.fc17.noarch

- python-boto-2.5.2-1.fc17.noarch

- python-carrot-0.10.7-4.fc17.noarch

- python-cheetah-2.4.4-2.fc17.x86_64

- python-cherrypy-3.2.2-1.fc17.noarch

- python-coverage-3.5.1-0.3.b1.fc17.x86_64

- python-crypto-2.6-1.fc17.x86_64

- python-dateutil-1.5-3.fc17.noarch

- python-devel-2.7.3-7.2.fc17.x86_64

- python-docutils-0.8.1-3.fc17.noarch

- python-eventlet-0.9.17-1.fc17.noarch

- python-feedparser-5.1.2-2.fc17.noarch

- python-gflags-1.5.1-2.fc17.noarch

- python-greenlet-0.3.1-11.fc17.x86_64

- python-httplib2-0.7.4-6.fc17.noarch

- python-iso8601-0.1.4-4.fc17.noarch

- python-jinja2-2.6-2.fc17.noarch

- python-kombu-1.1.3-2.fc17.noarch

- python-lockfile-0.9.1-2.fc17.noarch

- python-logilab-astng-0.23.1-1.fc17.noarch

- python-logilab-common-0.57.1-2.fc17.noarch

- python-lxml-2.3.5-1.fc17.x86_64

- python-markdown-2.1.1-1.fc17.noarch

- python-migrate-0.7.2-2.fc17.noarch

- python-mox-0.5.3-4.fc17.noarch

- python-netaddr-0.7.5-4.fc17.noarch

- python-nose-1.1.2-2.fc17.noarch

- python-paramiko-1.7.7.1-2.fc17.noarch

- python-paste-deploy-1.5.0-4.fc17.noarch

- python-paste-script-1.7.5-4.fc17.noarch

- python-pep8-1.0.1-1.fc17.noarch

- python-pip-1.0.2-2.fc17.noarch

- python-pygments-1.4-4.fc17.noarch

- python-qpid-0.18-1.fc17.noarch

- python-routes-1.12.3-3.fc17.noarch

- python-setuptools-0.6.27-2.fc17.noarch

- python-sphinx-1.1.3-1.fc17.noarch

- python-sqlalchemy-0.7.9-1.fc17.x86_64

- python-suds-0.4.1-2.fc17.noarch

- python-tempita-0.5.1-1.fc17.noarch

- python-unittest2-0.5.1-3.fc17.noarch

- python-virtualenv-1.7.1.2-2.fc17.noarch

- python-webob-1.1.1-2.fc17.noarch

- python-wsgiref-0.1.2-8.fc17.noarch

- pyxattr-0.5.1-1.fc17.x86_64

- PyYAML-3.10-3.fc17.x86_64

- qemu-common-1.0.1-2.fc17.x86_64

- qemu-img-1.0.1-2.fc17.x86_64

- qemu-system-x86-1.0.1-2.fc17.x86_64

- qpid-cpp-client-0.18-5.fc17.x86_64

- qpid-cpp-server-0.18-5.fc17.x86_64

- radvd-1.8.5-3.fc17.x86_64

- screen-4.1.0-0.9.20120314git3c2946.fc17.x86_64

- scsi-target-utils-1.0.24-6.fc17.x86_64

- seabios-bin-1.7.1-1.fc17.noarch

- sg3_utils-1.31-2.fc17.x86_64

- sgabios-bin-0-0.20110622SVN.fc17.noarch

- spice-server-0.10.1-5.fc17.x86_64

- sqlite-3.7.11-3.fc17.x86_64

- tcpdump-4.2.1-3.fc17.x86_64

- vgabios-0.6c-4.fc17.noarch

- wget-1.13.4-7.fc17.x86_64

- xen-libs-4.1.3-5.fc17.x86_64

- xen-licenses-4.1.3-5.fc17.x86_64

Python packages installed (with PIP)

These all ended up in /usr/lib/python2.7/site-packages :-(

- WebOb

- amqplib

- boto (splattering over existing boto RPM package with older version)

- cinderclient

- cliff

- cmd2

- compressor

- django_appconf

- django_compressor

- django_openstack_auth

- glance

- horizon

- jsonschema

- keyring

- keystoneclient

- kombu

- lockfile

- nova

- openstack_auth

- pam

- passlib

- prettytable

- pyparsing

- python-cinderclient

- python-glanceclient

- python-novaclient

- python-openstackclient

- python-quantumclient

- python-swiftclient

- pytz

- quantumclient

- suds

- swiftclient

- warlock

- webob

Files changed

- /etc/group (added $USER to ‘libvirtd’ group)

- /etc/gshadow (as above)

- /etc/httpd/conf/httpd.conf (changes Listen 80 to Listen 0.0.0.0:80)

- /usr/lib/python2.7/site-packages/boto (due to overwriting RPM provided boto)

- /usr/bin/cq (as above)

- /usr/bin/elbadmin (as above)

- /usr/bin/list_instances (as above)

- /usr/bin/lss3 (as above)

- /usr/bin/route53 (as above)

- /usr/bin/s3multiput (as above)

- /usr/bin/s3put (as above)

- /usr/bin/sdbadmin (as above)

Files created

- /etc/cinder/*

- /etc/glance/*

- /etc/keystone/*

- /etc/nova/*

- /etc/httpd/conf.d/horizon.conf

- /etc/polkit-1/localauthority/50-local.d/50-libvirt-remote-access.pkla

- /etc/sudoers.d/50_stack_sh

- /etc/sudoers.d/cinder-rootwrap

- /etc/sudoers.d/nova-rootwrap

- $DEST/cinder/*

- $DEST/data/*

- $DEST/glance/*

- $DEST/horizon/*

- $DEST/keystone/*

- $DEST/noVNC/*

- $DEST/nova/*

- $DEST/python-cinderclient/*

- $DEST/python-glanceclient/*

- $DEST/python-keystoneclient/*

- $DEST/python-novaclient/*

- $DEST/python-openstackclient/*

- /usr/bin/cinder*

- /usr/bin/glance*

- /usr/bin/keystone*

- /usr/bin/nova*

- /usr/bin/openstack

- /usr/bin/quantum

- /usr/bin/swift

- /var/cache/cinder/*

- /var/cache/glance/*

- /var/cache/keystone/*

- /var/cache/nova/*

- /var/lib/mysql/cinder/*

- /var/lib/mysql/glance/*

- /var/lib/mysql/keystone/*

- /var/lib/mysql/mysql/*

- /var/lib/mysql/nova/*

- /var/lib/mysql/performance_schema/*

Thoughts on installation

As we can see from the details above, DevStack does a very significant amount of work as root using sudo. I had fully expected that it was installing RPMs as root, but I had not counted on it adding extra python modules into /usr/lib/python2.7, nor the addition of files in /etc/, /var or /usr/bin. I had set the $DEST environment variable for DevStack, naively assuming that it would cause it to install everything possible under that location. In fact the $DEST variable was only used to control where the GIT checkouts of each openstack component went, along with a few misc files in $DEST/files/.

IMHO a development environment setup tool should do as little as humanly possible as root. From the above list of changes, the only things that I believe justify use of sudo privileges are:

- Installation of RPMs from standard YUM repositories

- Installation of /etc/sudoers.d/ config files

- Installation of /etc/polkit file to grant access to libvirtd

Everything else is capable of being 100% isolated from the rest of the OS, under the $DEST directory location. Taking that into account my preferred development setup would be

    $DEST
     +- nova      (GIT checkout)
     +- ...etc... (GIT checkout)
     +- vroot
         +- bin
         |   +- nova
         |   +- ...etc...
         +- etc
         |   +- nova
         |   +- ...etc...
         +- lib
         |   +- python2.7
         |       +- site-packages
         |           +- boto
         |           +- ...etc...
         +- var
             +- cache
             |   +- nova
             |   +- ...etc...
             +- lib
                 +- mysql

This would imply running a private copy of qpid, mysql and httpd, ideally all inside the same screen session as the rest of the OpenStack services, using unprivileged ports. Even if we relied on the system instances of qpid, mysql and httpd, and did a little bit more privileged config, 95% of the rest of the stuff DevStack does as root could still be kept unprivileged. I am also aware that current OpenStack code may not be amenable to installation in locations outside of / by default, but the code is all there to be modified to cope with arbitrary install locations if desired/required.
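As a rough illustration of how little is needed to set up such a private root, the sketch below creates the proposed vroot layout and builds an environment that points at it. The helper names are invented for this example, and it says nothing about how the OpenStack services themselves would need to be taught to honour the alternative locations.

```python
import os

# Sub-directories of the proposed $DEST/vroot layout.
VROOT_DIRS = [
    "bin",
    "etc",
    "lib/python2.7/site-packages",
    "var/cache",
    "var/lib/mysql",
]

def make_vroot(dest):
    """Create the vroot skeleton under `dest` without needing root privileges."""
    vroot = os.path.join(dest, "vroot")
    for sub in VROOT_DIRS:
        os.makedirs(os.path.join(vroot, *sub.split("/")), exist_ok=True)
    return vroot

def vroot_env(vroot, base_env):
    """Return a copy of `base_env` with PATH and PYTHONPATH pointing into vroot."""
    env = dict(base_env)
    parts = [os.path.join(vroot, "bin")]
    if env.get("PATH"):
        parts.append(env["PATH"])
    env["PATH"] = os.pathsep.join(parts)
    env["PYTHONPATH"] = os.path.join(vroot, "lib", "python2.7", "site-packages")
    return env
```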

My other wishlist item for DevStack would be for it to print output that is meaningful to the end user running it. Simply printing a verbose list of every single shell command executed is one of the most unkind things you can do to a user. I’d like to see

    # ./devstack.sh
    * Cloning GIT repositories
     - 1/10 nova
     - 2/10 cinder
     - 3/10 quantum
    * Installing RPMs using YUM
     - 1/30 python-pip
     - 2/30 libvirt
     - 3/30 libvirt-client
    * Installing Python packages to $DEST/vroot/lib/python2.7/site-packages using PIP
     - 1/24 WebOb
     - 2/24 amqplib
     - 3/24 boto
    * Creating database schemas
     - 1/10 nova
     - 2/10 cinder
     - 3/10 quantum

By all means still save the full list of every shell command and their output to a ‘devstack.log’ file for troubleshooting when things go wrong.
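The split between a terse summary for the user and a verbose log for troubleshooting could look something like this sketch. It is a hypothetical helper, not anything DevStack provides: `action` is assumed to be a callable that performs one step and returns its full output as text.

```python
def run_phase(title, items, action, out, log):
    """Run `action` on each item, summarising to `out` and detailing to `log`.

    `out` and `log` are file-like objects, e.g. sys.stdout and an open
    'devstack.log' file.
    """
    out.write("* %s\n" % title)
    total = len(items)
    for index, name in enumerate(items, 1):
        # One short progress line per item for the person watching.
        out.write(" - %d/%d %s\n" % (index, total, name))
        # Everything else goes to the log for later troubleshooting.
        log.write("== %s: %s ==\n" % (title, name))
        log.write(action(name))
```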

When first getting involved in the OpenStack project as a developer, most people will probably recommend use of DevStack. When I first started hacking, I skipped this because it wasn’t reliable on Fedora at that time, but these days it works just fine and there are even basic instructions for DevStack on Fedora. Last week I decided to finally give DevStack a go, since my hand-crafted dev environment was getting kind of nasty. The front page on the DevStack website says it is only supported on Fedora 16, but don’t let that put you off; aside from one bug which does not appear distro specific, it all seemed to work correctly. What follows is an overview of what I did / learnt

Setting up the virtual machine

I don’t really like letting scripts like DevStack mess around with my primary development environment, particularly when there is little-to-no documentation about what changes they will be making and they ask for unrestricted sudo (sigh) privileges! Thus running DevStack inside a virtual machine was the obvious way to go. Yes, this means actual VMs run by Nova will be forced to use plain QEMU emulation (or nested KVM if you are brave), but for dev purposes this is fine, since the VMs don’t need to do anything except boot. My host is Fedora 17, and for simplicity I decided that my guest dev environment will also be Fedora 17. With that decided, installing the guest was a simple matter of running virt-install on the host as root

# virt-install --name f17x86_64 --ram 2000 --file /var/lib/libvirt/images/f17x86_64.img --file-size 20 --accelerate --location http://mirror2.hs-esslingen.de/fedora/linux//releases/17/Fedora/x86_64/os/ --os-variant fedora17

I picked the defaults for all installer options, except for reducing the swap file size down to a more sensible 500 MB (rather than the 4 G it suggested). NB if copying this, you probably want to change the URL used to point to your own best mirror location.

Once installation completed, run through the firstboot wizard, creating yourself an unprivileged user account, then login as root. First add the user to the wheel group, to enable it to run sudo commands:

# gpasswd -a YOURUSERNAME wheel

The last step before getting onto DevStack is to install GIT

# yum -y install git

Setting up DevStack

The recommended way to use DevStack is to simply check it out of GIT and run the latest code available. I like to keep all my source code checkouts in one place, so I’m using $HOME/src/openstack for this project

$ mkdir -p $HOME/src/openstack

$ cd $HOME/src/openstack

$ git clone git://github.com/openstack-dev/devstack.git

Arguably you can just kick off the stack.sh script at this point, but there are some modifications that are a good idea to make first. This involves creating a “localrc” file in the top level directory of the DevStack checkout

$ cd devstack

$ cat > localrc <<EOF

# Stop DevStack polluting /opt/stack

DEST=$HOME/src/openstack

# Switch to use QPid instead of RabbitMQ

disable_service rabbit

enable_service qpid

# Replace with your primary interface name

HOST_IP_IFACE=eth0

PUBLIC_INTERFACE=eth0

VLAN_INTERFACE=eth0

FLAT_INTERFACE=eth0

# Replace with whatever password you wish to use

MYSQL_PASSWORD=badpassword

SERVICE_TOKEN=badpassword

SERVICE_PASSWORD=badpassword

ADMIN_PASSWORD=badpassword

# Pre-populate glance with a minimal image and a Fedora 17 image

IMAGE_URLS="http://launchpad.net/cirros/trunk/0.3.0/+download/cirros-0.3.0-x86_64-uec.tar.gz,http://berrange.fedorapeople.org/images/2012-11-15/f17-x86_64-openstack-sda.qcow2"

EOF

With the localrc created, now just kick off the stack.sh script

$ ./stack.sh

At time of writing there is a bug in DevStack which will cause it to fail to complete correctly – it is checking for the existence of paths before it has created them. Fortunately, just running it for a second time is a simple workaround

$ ./unstack.sh

$ ./stack.sh

From a completely fresh Fedora 17 desktop install, stack.sh will take a while to complete, as it installs a large number of pre-requisite RPMs and downloads the appliance images. Once it has finished it should tell you what URL the Horizon web interface is running on. Point your browser to it and login as “admin” with the password provided in your localrc file earlier.

Because we told DevStack to use $HOME/src/openstack as the base directory, a small permissions tweak is needed to allow QEMU to access disk images that will be created during testing.

$ chmod o+rx $HOME

Note that SELinux can be left ENFORCING, as it will just “do the right thing” with the VM disk image labelling.

UPDATE: if you want to use the Horizon web interface, then you do in fact need to set SELinux to permissive mode, since Apache won’t be allowed to access your GIT checkout where the Horizon files live.

$ sudo su -

# setenforce 0

# vi /etc/sysconfig/selinux

...change to permissive...

UPDATE: If you want to use Horizon, you must also manually install Node.js from a 3rd party repository, because it is not yet included in Fedora package repositories:

# yum localinstall --nogpgcheck http://nodejs.tchol.org/repocfg/fedora/nodejs-stable-release.noarch.rpm

# yum -y install nodejs nodejs-compat-symlinks

# systemctl restart httpd.service

Testing DevStack

Before going any further, it is a good idea to make sure that things are operating somewhat normally. DevStack has created a file containing the environment variables required to communicate with OpenStack, so load that first

$ . openrc

Now check what images are available in glance. If you used the IMAGE_URLS example above, glance will have been pre-populated

    $ glance image-list
    +--------------------------------------+---------------------------------+-------------+------------------+-----------+--------+
    | ID                                   | Name                            | Disk Format | Container Format | Size      | Status |
    +--------------------------------------+---------------------------------+-------------+------------------+-----------+--------+
    | 32b06aae-2dc7-40e9-b42b-551f08e0b3f9 | cirros-0.3.0-x86_64-uec-kernel  | aki         | aki              | 4731440   | active |
    | 61942b99-f31c-4155-bd6c-d51971d141d3 | f17-x86_64-openstack-sda        | qcow2       | bare             | 251985920 | active |
    | 9fea8b4c-164b-4f54-8e74-b53966e858a6 | cirros-0.3.0-x86_64-uec-ramdisk | ari         | ari              | 2254249   | active |
    | ec3e9b72-0970-44f2-b442-58d0042448f7 | cirros-0.3.0-x86_64-uec         | ami         | ami              | 25165824  | active |
    +--------------------------------------+---------------------------------+-------------+------------------+-----------+--------+

Incidentally the glance sort ordering is less than helpful here – it appears to be sorting based on the UUID strings rather than the image names :-(
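If the ordering gets in the way, the table output is simple enough to re-sort outside of glance. The sketch below is a hypothetical helper that assumes the ASCII table layout shown above (rows starting with `|`, borders starting with `+`) and re-orders the rows by the Name column.

```python
def parse_table(text):
    """Turn an ASCII table like the glance output into a list of dicts."""
    header = None
    rows = []
    for line in text.splitlines():
        line = line.strip()
        if not line.startswith("|"):
            continue  # skip the +----+ borders and any blank lines
        cells = [cell.strip() for cell in line.strip("|").split("|")]
        if header is None:
            header = cells  # the first | row holds the column names
        else:
            rows.append(dict(zip(header, cells)))
    return rows

def sorted_by_name(text):
    """Return the table rows ordered by their 'Name' column."""
    return sorted(parse_table(text), key=lambda row: row["Name"])
```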

Before booting an instance, Nova likes to be given an SSH public key, which it will inject into the guest filesystem to allow admin login

$ nova keypair-add --pub-key $HOME/.ssh/id_rsa.pub mykey

Finally an image can be booted

    $ nova boot --key-name mykey --image f17-x86_64-openstack-sda --flavor m1.tiny f17demo1
    +------------------------+--------------------------------------+
    | Property               | Value                                |
    +------------------------+--------------------------------------+
    | OS-DCF:diskConfig      | MANUAL                               |
    | OS-EXT-STS:power_state | 0                                    |
    | OS-EXT-STS:task_state  | scheduling                           |
    | OS-EXT-STS:vm_state    | building                             |
    | accessIPv4             |                                      |
    | accessIPv6             |                                      |
    | adminPass              | NsddfbJtR6yy                         |
    | config_drive           |                                      |
    | created                | 2012-11-19T15:00:51Z                 |
    | flavor                 | m1.tiny                              |
    | hostId                 |                                      |
    | id                     | 6ee509f9-b612-492b-b55b-a36146e6833e |
    | image                  | f17-x86_64-openstack-sda             |
    | key_name               | mykey                                |
    | metadata               | {}                                   |
    | name                   | f17demo1                             |
    | progress               | 0                                    |
    | security_groups        | [{u'name': u'default'}]              |
    | status                 | BUILD                                |
    | tenant_id              | dd3d27564c6043ef87a31404aeb01ac5     |
    | updated                | 2012-11-19T15:00:55Z                 |
    | user_id                | 72ae640f50434d07abe7bb6a8e3aba4e     |
    +------------------------+--------------------------------------+

Since we’re running QEMU inside a KVM guest, booting the image will take a little while – several minutes or more. Just keep running the ‘nova list’ command to keep an eye on it, until it shows up as ACTIVE

    $ nova list
    +--------------------------------------+----------+--------+------------------+
    | ID                                   | Name     | Status | Networks         |
    +--------------------------------------+----------+--------+------------------+
    | 6ee509f9-b612-492b-b55b-a36146e6833e | f17demo1 | ACTIVE | private=10.0.0.2 |
    +--------------------------------------+----------+--------+------------------+
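Rather than re-running the command by hand, the wait can be scripted. The sketch below is deliberately generic: `get_status` is a hypothetical callable that returns the instance's current status string by whatever means you prefer (for example by parsing ‘nova list’ output), and the timeout and interval values are arbitrary.

```python
import time

def wait_for_active(get_status, timeout=600, interval=5,
                    sleep=time.sleep, clock=time.monotonic):
    """Poll `get_status()` until it reports ACTIVE.

    Returns True once ACTIVE is seen, False if `timeout` seconds pass first,
    and raises RuntimeError if the instance reports ERROR.
    """
    deadline = clock() + timeout
    while True:
        status = get_status()
        if status == "ACTIVE":
            return True
        if status == "ERROR":
            raise RuntimeError("instance entered ERROR state")
        if clock() >= deadline:
            return False
        sleep(interval)
```

With plain QEMU emulation inside a guest, a generous timeout is sensible, since boots of several minutes are normal in this setup.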

Just to prove that it really is working, log in to the instance with SSH

$ ssh ec2-user@10.0.0.2

The authenticity of host '10.0.0.2 (10.0.0.2)' can't be established.

RSA key fingerprint is 9a:73:e5:1a:39:e2:f7:a5:10:a7:dd:bc:db:6e:87:f5.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added '10.0.0.2' (RSA) to the list of known hosts.

[ec2-user@f17demo1 ~]$ sudo su -

[root@f17demo1 ~]#

Working with DevStack

The DevStack setup runs all the python services under a screen session. To stop/start individual services, attach to the screen session with the ‘rejoin-stack.sh’ script. Each service is running under a separate screen “window”. Switch to the window containing the service to be restarted, and just Ctrl-C it and then use bash history to run the same command again.

$ ./rejoin-stack.sh

Sometimes the entire process set will need to be restarted. In this case, just kill the screen session entirely, which causes all the OpenStack services to go away. Then the same ‘rejoin-stack.sh’ script can be used to start them all again.

One annoyance is that unless you have the screen session open, the debug messages from Nova don’t appear to end up anywhere useful. I’ve taken to editing the file “stack-screen” to make each service log to a local file in its checkout, e.g. I changed

stuff "cd /home/berrange/src/openstack/nova && sg libvirtd /home/berrange/src/openstack/nova/bin/nova-compute"

to

stuff "cd /home/berrange/src/openstack/nova && sg libvirtd /home/berrange/src/openstack/nova/bin/nova-compute 2>&1 | tee nova-compute.log"
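Making that edit for every service by hand gets tedious, so here is a sketch of how it might be automated. It assumes each relevant line has exactly the shape shown above, namely `stuff "<command>"` with the service binary as the last word of the command; lines that do not match, or that already pipe into tee, are returned unchanged. The function name is invented for this example.

```python
import re

# Matches:  stuff "<command>"   (with optional leading/trailing whitespace)
STUFF = re.compile(r'^(\s*stuff ")(.*?)(")\s*$')

def add_tee(line):
    """Append '2>&1 | tee <service>.log' to the command on a stuff line."""
    match = STUFF.match(line)
    if not match or "| tee " in match.group(2):
        return line
    command = match.group(2)
    # Name the log after the binary, e.g. .../bin/nova-compute -> nova-compute.log
    service = command.split()[-1].rsplit("/", 1)[-1]
    return "%s%s 2>&1 | tee %s.log%s" % (
        match.group(1), command, service, match.group(3))
```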

The Folsom release of OpenStack has been out for a few weeks now, and I had intended to write this post much earlier, but other things (moving house, getting married & travelling to LinuxCon Europe / KVM Forum all in the space of a month) got in the way. There are highlighted release notes, but I wanted to give a little more detail on some of the changes I was involved with making to the libvirt driver and what motivated them.

XML configuration

First off was a change in the way Nova generates libvirt XML configurations. Previously the libvirt driver in Nova used the Cheetah templating system to generate its XML configurations. The problem with this is that there was a lot of information that needed to be passed into the template as parameters, so Nova was inventing an ad hoc configuration format for libvirt guest config internally, which was then further translated into proper guest config in the template. The resulting code was hard to maintain and understand, because the logic for constructing the XML was effectively spread across both the template file and the libvirt driver code with no consistent structure. Thus the first big change that went into the libvirt driver during Folsom was to introduce a formal set of python configuration objects to represent the libvirt XML config. The libvirt driver code now directly populates these config objects with the required data, and then simply serializes the objects to XML. The use of Cheetah has been completely eliminated, and the code structure is clarified significantly as a result. There is a wiki page describing this in a little more detail.
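To give a flavour of the "populate objects, then serialize" pattern, here is a minimal sketch using only the Python standard library. The class names and the choice of fields are invented for this illustration and are not Nova's actual config classes; only the libvirt element names (domain, name, memory, vcpu, devices, disk, driver, source, target) are real.

```python
import xml.etree.ElementTree as ET

class DiskConfig:
    """Illustrative stand-in for a guest disk config object."""

    def __init__(self, source_path, target_dev, driver_format="qcow2"):
        self.source_path = source_path
        self.target_dev = target_dev
        self.driver_format = driver_format

    def to_element(self):
        disk = ET.Element("disk", type="file", device="disk")
        ET.SubElement(disk, "driver", name="qemu", type=self.driver_format)
        ET.SubElement(disk, "source", file=self.source_path)
        ET.SubElement(disk, "target", dev=self.target_dev, bus="virtio")
        return disk

class GuestConfig:
    """Illustrative stand-in for a whole-guest config object."""

    def __init__(self, name, memory_kib, vcpus):
        self.name = name
        self.memory_kib = memory_kib
        self.vcpus = vcpus
        self.disks = []

    def to_xml(self):
        domain = ET.Element("domain", type="kvm")
        ET.SubElement(domain, "name").text = self.name
        ET.SubElement(domain, "memory").text = str(self.memory_kib)
        ET.SubElement(domain, "vcpu").text = str(self.vcpus)
        devices = ET.SubElement(domain, "devices")
        for disk in self.disks:
            devices.append(disk.to_element())
        return ET.tostring(domain, encoding="unicode")
```

The driver code only ever touches attributes such as `guest.disks`, and all knowledge of element and attribute names lives in one place, which is the maintainability win over having it split between a template and the driver.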

CPU model configuration

The primary downside from the removal of the Cheetah templating is that it is no longer possible for admins deploying Nova to make ad hoc changes to the libvirt guest XML that is used. Personally I’d actually argue that this is a good thing, because the ability to make ad hoc changes meant that there was less motivation for directly addressing the missing features in Nova, but I know plenty of people would disagree with this view :-) It was quickly apparent that the one change a great many people were making to the libvirt XML config was to specify a guest CPU model. If no explicit CPU model is requested in the guest config, KVM will start with a generic, lowest common denominator model that will typically work everywhere. As can be expected, this generic CPU model is not going to offer optimal performance for the guests. For example, if your host has shiny new CPUs with builtin AES encryption instructions, the guest is not going to be able to take advantage of them. Thus the second big change in the Nova libvirt driver was to introduce explicit support for configuring the CPU model. This involves two new Nova config parameters. The first, libvirt_cpu_mode, chooses between “host-model”, “host-passthrough” and “custom”. If the mode is set to “custom”, then the second, libvirt_cpu_model, is used to specify the name of the custom CPU that is required. Again there is a wiki page describing this in a little more detail.

Once the ability to choose CPU models was merged, it was decided that the default behaviour should also be changed. Thus if Nova is configured to use KVM as its hypervisor, then it will use the “host-model” CPU mode by default. This causes the guest CPU model to be an (almost) exact copy of the host CPU model, offering maximum out of the box performance. There turned out to be one small wrinkle in this choice when using nested KVM though. Due to a combination of problems in libvirt and KVM, use of “host-model” fails for nested KVM. Thus anyone using nested KVM needs to set libvirt_cpu_mode=“none” as a workaround for now. If you’re using KVM on bare metal everything should be fine, which is of course the normal scenario for production deployments.
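For reference, the modes discussed above correspond to different shapes of the `<cpu>` element in the libvirt guest XML. The sketch below shows one plausible mapping, including the “none” workaround, which simply omits the element. The function is hypothetical and not taken from Nova; in particular, `match="exact"` for the custom mode is an assumption made for this example.

```python
import xml.etree.ElementTree as ET

def cpu_element(mode, model=None):
    """Build a libvirt <cpu> element for the given mode, or None for 'none'."""
    if mode == "none":
        return None  # no <cpu> element: the hypervisor's default CPU is used
    if mode in ("host-model", "host-passthrough"):
        return ET.Element("cpu", mode=mode)
    if mode == "custom":
        if not model:
            raise ValueError("custom mode needs a CPU model name")
        cpu = ET.Element("cpu", mode="custom", match="exact")
        ET.SubElement(cpu, "model").text = model
        return cpu
    raise ValueError("unknown CPU mode: %r" % mode)
```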

Time keeping policy

Again on the performance theme, the libvirt Nova driver was updated to set time keeping policies for KVM guests. Virtual machines on x86 have a number of timers available including the PIT, RTC, PM-Timer and HPET. Reliable timers are one of the hardest problems to solve in full machine virtualization platforms, and KVM is no exception. It all comes down to the question of what to do when the hypervisor cannot inject a timer interrupt at the correct time, because a different guest is running. There are a number of policies available: inject the missed tick as soon as possible, merge all missed ticks into one and deliver it as soon as possible, temporarily inject missed ticks at a higher rate than normal to “catch up”, or simply discard the missed tick entirely. It turns out that Windows 7 is particularly sensitive to timers and the default KVM policies for missing ticks were causing frequent crashes, while older Linux guests would often experience severe time drift. Research validated by the oVirt project team has previously identified an optimal set of policies that should keep the majority of guests happy. Thus the libvirt Nova driver was updated to set explicit policies for time keeping with the PIT and RTC timers when using KVM, which should make everything time related much more reliable.
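In libvirt guest XML, these policies are expressed as `tickpolicy` attributes on `<timer>` elements inside `<clock>`, with the values `delay`, `catchup`, `merge` and `discard`. The sketch below builds such an element from a mapping of timer name to policy. The helper is hypothetical, and the specific pairing used in the usage note (PIT with `delay`, RTC with `catchup`) is an assumption for illustration, since the text above does not say which policies were chosen.

```python
import xml.etree.ElementTree as ET

# The tick policy names accepted by libvirt's <timer tickpolicy="..."/>.
TICK_POLICIES = {"delay", "catchup", "merge", "discard"}

def clock_element(policies, offset="utc"):
    """Build a <clock> element with one <timer> child per entry in `policies`.

    `policies` maps a timer name (e.g. 'pit', 'rtc') to a tick policy.
    """
    clock = ET.Element("clock", offset=offset)
    for name, policy in policies.items():
        if policy not in TICK_POLICIES:
            raise ValueError("unknown tick policy: %r" % policy)
        ET.SubElement(clock, "timer", name=name, tickpolicy=policy)
    return clock
```

For example, `clock_element({"pit": "delay", "rtc": "catchup"})` yields a clock with two timer children carrying those policies.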

Libvirt authentication

The libvirtd daemon can be configured with a number of different authentication schemes. Out of the box it will use PolicyKit to authenticate clients, and thus Nova packages on Fedora / RHEL / EPEL include a policykit configuration file which grants Nova the ability to connect to libvirt. Administrators may, however, decide to use a different configuration scheme, for example, SASL. If the scheme chosen required a username+password, there was no way for Nova’s libvirt driver to provide these authentication credentials. Fortunately the libvirt client has the ability to lookup credentials in a local file. Unfortunately the way Nova connected to libvirt prevented this from working. Thus the way the Nova libvirt driver used openAuth() was fixed to allow the default credential lookup logic to work. It is now possible to require authentication between Nova and libvirt thus:

# augtool -s set /files/etc/libvirt/libvirtd.conf/auth_unix_rw sasl

Saved 1 file(s)

# saslpasswd2 -a libvirt nova

Password: XYZ

Again (for verification): XYZ

# su - nova -s /bin/sh

$ mkdir -p $HOME/.config/libvirt

$ cat > $HOME/.config/libvirt/auth.conf <<EOF

[credentials-nova]

authname=nova

password=XYZ

[auth-libvirt-localhost]

credentials=nova

EOF
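The structure of that auth.conf file is worth a second look: the [auth-libvirt-localhost] group names a service and hostname, and its credentials key points at the [credentials-nova] group that holds the actual username and password. Here is a small sketch that mimics this two-step lookup using Python's configparser. It is only an illustration of the file layout; the real lookup happens inside the libvirt client library, and the function name lookup_credentials is hypothetical.

```python
import configparser

# Same content as the auth.conf file created above.
AUTH_CONF = """
[credentials-nova]
authname=nova
password=XYZ

[auth-libvirt-localhost]
credentials=nova
"""


def lookup_credentials(conf_text, service, hostname):
    """Resolve credentials for a service/hostname pair.

    Step 1: find the [auth-SERVICE-HOSTNAME] group.
    Step 2: follow its 'credentials' key to [credentials-NAME].
    Returns a dict of the credential fields, or None if no group matches.
    """
    # Disable interpolation so a '%' in a password is taken literally.
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(conf_text)
    section = "auth-%s-%s" % (service, hostname)
    if not parser.has_section(section):
        return None
    name = parser.get(section, "credentials")
    return dict(parser.items("credentials-%s" % name))


print(lookup_credentials(AUTH_CONF, "libvirt", "localhost"))
```

Keeping the credentials in their own group means several auth groups can share a single username and password.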

Other changes

Obviously I was not the only person working on the libvirt driver in Folsom; many others contributed work too. Leander Beernaert provided an implementation of the ‘nova diagnostics’ command that works with the libvirt driver, showing the virtual machine CPU, memory, disk and network interface utilization statistics. Pádraig Brady improved the performance of migration, by sending the qcow2 image between hosts directly, instead of converting it to a raw file, sending that, and then converting it back to qcow2. Instead of transferring 10 GB of raw data, it can now send just the data actually used, which may be as little as a few hundred MB. In his test case, this reduced the time to migrate from 7 minutes to 30 seconds, which I’m sure everyone will like to hear :-) Pádraig also optimized the file injection code so that it only mounts the guest image once to inject all data, instead of mounting it separately for each injected item. Boris Filippov contributed support for storing VM disk images in LVM volumes, instead of qcow2 files, while Ben Swartzlander contributed support for using NFS files as the backing for virtual block volumes. Vish updated the way the libvirt driver generates XML configuration for disks, to include the “serial” property against each disk, based on the nova volume ID. This allows the guest OS admin to reliably identify the disk in the guest, using the /dev/disk/by-id/virtio-<volume id> paths, since the /dev/vdXXX device names are pretty randomly assigned by the kernel.

Not directly part of the libvirt driver, but Jim Fehlig enhanced the Nova VM scheduler so that it can take account of the hypervisor, architecture and VM mode (paravirt vs HVM) when choosing what host to boot an image on. This makes it much more practical to run mixed environments of say, Xen and KVM, or Xen fullvirt vs Xen paravirt, or Arm vs x86, etc. When uploading an image to glance, the admin can tag it with properties specifying the desired hypervisor/architecture/vm_mode. The compute drivers then report what combinations they can support, and the scheduler computes the intersection to figure out which hosts are valid candidates for running the image.
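The intersection logic is simple enough to sketch in a few lines. This is not the scheduler's actual code: the function names, the image property keys ("architecture", "hypervisor_type", "vm_mode") and the host data below are all assumptions chosen for the example. An image property that is left unset is treated as "don't care".

```python
def image_requirements(image_props):
    """Extract the (arch, hypervisor, vm_mode) triple an image asks for.

    Missing properties come back as None, meaning "any value is fine".
    The property key names are assumed for this sketch.
    """
    return (
        image_props.get("architecture"),
        image_props.get("hypervisor_type"),
        image_props.get("vm_mode"),
    )


def host_supports(supported, wanted):
    """True if any triple reported by the host satisfies the request."""
    return any(
        all(w is None or w == s for w, s in zip(wanted, triple))
        for triple in supported
    )


def candidate_hosts(hosts, image_props):
    """Return the names of hosts able to run the image, sorted by name."""
    wanted = image_requirements(image_props)
    return [
        name
        for name, supported in sorted(hosts.items())
        if host_supports(supported, wanted)
    ]


# Hypothetical capabilities reported by three compute hosts.
HOSTS = {
    "kvm-host": [("x86_64", "kvm", "hvm"), ("i686", "kvm", "hvm")],
    "xen-host": [("x86_64", "xen", "xen"), ("x86_64", "xen", "hvm")],
    "arm-host": [("armv7l", "kvm", "hvm")],
}

print(candidate_hosts(HOSTS, {"architecture": "x86_64", "vm_mode": "hvm"}))
```

An image tagged with only an architecture matches any hypervisor on that architecture, while a fully tagged image narrows the candidates down to hosts reporting that exact combination.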

When launching a virtual machine, Nova has the ability to inject various files into the disk image immediately prior to boot up. This is used to perform the following setup operations:

- Add an authorized SSH key for the root account

- Configure init to reset SELinux labelling on /root/.ssh

- Set the login password for the root account

- Copy data into a number of user specified files

- Create the meta.js file

- Configure network interfaces in the guest

This file injection is handled by the code in the nova.virt.disk.api module. The code which does the actual injection is designed around the assumption that the filesystem in the guest image can be mapped into a location in the host filesystem. There are a number of ways this can be done, so Nova has a pluggable API for mounting guest images in the host, defined by the nova.virt.disk.mount module, with the following implementations:

- Loop – Use losetup to create a loop device. Then use kpartx to map the partitions within the device, and finally mount the designated partition. Alternatively on new enough kernels the loop device’s builtin partition support is used instead of kpartx.

- NBD – Use qemu-nbd to run an NBD server and attach with the kernel NBD client to expose a device. Mapping partitions is then handled as per the Loop module.

- GuestFS – Use libguestfs to inspect the image and setup a FUSE mount for all partitions or logical volumes inside the image.

The Loop module can only handle Raw format files, while the NBD module can handle any format that QEMU supports. While they have the ability to access partitions, the code handling this is very dumb. It requires the Nova global ‘libvirt_inject_partition’ config parameter to specify which partition number to inject. The result is that every image you upload to glance must be partitioned in exactly the same way. Much better would be if it used a metadata parameter associated with the image. The GuestFS module is much more advanced and inspects the guest OS to figure out arbitrarily partitioned images and even LVM based images.

Nova has a “img_handlers” configuration parameter which defines the order in which the 3 mount modules above are to be tried. It tries to mount the image with each one in turn, until one succeeds. This is quite crude code really – it has already been hacked to avoid trying the Loop module if Nova knows it is using QCow2. It has to be changed by the Nova admin if they’re using LXC, otherwise you can end up using KVM with LXC guests which is probably not what you want. The try-and-fallback paradigm also has the undesirable behaviour of masking errors that you would really rather consider fatal to the boot process.
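To show why try-and-fallback hides problems, here is a stripped down sketch of the pattern. It is not the Nova code: the three mount functions are stand-in stubs, and their error messages and return values are invented for the example.

```python
def loop_mount(image):
    # Stub: the Loop module can only handle raw files.
    if not image.endswith(".raw"):
        raise ValueError("loop handles raw images only")
    return "/dev/loop0"


def nbd_mount(image):
    # Stub: simulate a host misconfiguration an admin would want to see.
    raise OSError("nbd kernel module not loaded")


def guestfs_mount(image):
    # Stub: pretend libguestfs set up a mount successfully.
    return "/tmp/openstack-guestfs"


MOUNTERS = {"loop": loop_mount, "nbd": nbd_mount, "guestfs": guestfs_mount}


def mount_with_fallback(image, handlers, mounters=MOUNTERS):
    """Try each handler in order and return (handler name, result).

    Failures are collected but only reported if every handler fails,
    which is exactly how a real error ends up being masked.
    """
    errors = []
    for name in handlers:
        try:
            return name, mounters[name](image)
        except Exception as exc:
            errors.append("%s: %s" % (name, exc))
    raise RuntimeError("cannot mount %s (%s)" % (image, "; ".join(errors)))


# The NBD failure is silently swallowed because guestfs works.
print(mount_with_fallback("disk.qcow2", ["loop", "nbd", "guestfs"]))
```

In this sketch the caller never learns that NBD is broken on the host; the failure only surfaces on a machine where libguestfs is not available as a last resort.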

As mentioned earlier, the file injection code uses the mount modules to map the guest image filesystem into a temporary directory in the host (such as /tmp/openstack-XXXXXX). It then runs various commands like chmod, chown, mkdir, tee, etc to manipulate files in the guest image. Of course Nova runs as an unprivileged user, and the guest files to be changed are typically owned by root. This means all the file injection commands need to run via Nova’s rootwrap utility to gain root privileges. Needless to say, this has the undesirable consequence that the code injecting files into a guest image in fact has privileges that allow it to write to arbitrary areas of the host filesystem. One mistake in handling symlinks and you have the potential for a carefully crafted guest image to result in compromise of the host OS. It should come as little surprise that this has already resulted in a security vulnerability / CVE against Nova.
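The symlink problem is easy to demonstrate. Suppose a malicious image ships /root as a symlink pointing outside the image: a naive join of the mount directory and "/root/.ssh/authorized_keys" then lands on the host filesystem. The sketch below shows the kind of check needed before touching any path. The function name resolve_in_guest is hypothetical, and this is only an illustration of the idea: a check like this is still open to races if the image contents can change between the check and the write.

```python
import os
import tempfile


def resolve_in_guest(mount_dir, guest_path):
    """Map a path inside the guest to a host path under mount_dir.

    Symlinks are resolved first, and the result is rejected if it
    ends up anywhere outside the mounted guest filesystem.
    """
    root = os.path.realpath(mount_dir)
    target = os.path.realpath(os.path.join(root, guest_path.lstrip("/")))
    if target != root and not target.startswith(root + os.sep):
        raise ValueError("path escapes guest image: %s" % guest_path)
    return target


# Simulate a mounted image whose /root is a symlink to a host directory.
mount = tempfile.mkdtemp(prefix="openstack-")
outside = tempfile.mkdtemp(prefix="host-")
os.symlink(outside, os.path.join(mount, "root"))

try:
    resolve_in_guest(mount, "/root/.ssh/authorized_keys")
except ValueError as exc:
    print("rejected:", exc)
```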

The solution to this class of security problems is to decouple the file injection code from the host filesystem. This can be done by introducing a “VFS” (Virtual File System) interface which defines a formal API for the various logical operations that need to be performed on a guest filesystem. With that it is possible to provide an implementation that uses the libguestfs native Python API, rather than FUSE mounts. As well as being inherently more secure, avoiding the FUSE layer will improve performance, and allow Nova to utilize libguestfs APIs that don’t map into FUSE, such as its Augeas support for parsing config files. Nova still needs to work in scenarios where libguestfs is not available though, so a second implementation of the VFS APIs will be required based on the existing Loop/NBD device mount approach. The security of the non-libguestfs support has not changed with this refactoring work, but decoupling the file injection code from the host filesystem does make it easier to write unit tests for this code. The file injection code can be tested by mocking out the VFS layer, while the VFS implementations can be tested by mocking out the libguestfs or command execution APIs.
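As a rough idea of what such an interface could look like, here is a minimal sketch with an abstract VFS class, an in-memory fake suitable for unit tests, and an injection routine written purely against the interface. All class and method names here are hypothetical and chosen for the example; they are not necessarily the names used in Nova.

```python
import abc


class VFS(abc.ABC):
    """Logical operations the injection code needs on a guest filesystem."""

    @abc.abstractmethod
    def make_path(self, path):
        """Create a directory, including any missing parents."""

    @abc.abstractmethod
    def append_file(self, path, content):
        """Append text to a file, creating it if needed."""

    @abc.abstractmethod
    def read_file(self, path):
        """Return the text content of a file."""

    @abc.abstractmethod
    def set_permissions(self, path, mode):
        """Set the permission bits on a file or directory."""


class FakeVFS(VFS):
    """In-memory implementation that never touches the host filesystem."""

    def __init__(self):
        self.dirs = set()
        self.files = {}
        self.modes = {}

    def make_path(self, path):
        self.dirs.add(path)

    def append_file(self, path, content):
        self.files[path] = self.files.get(path, "") + content

    def read_file(self, path):
        return self.files[path]

    def set_permissions(self, path, mode):
        self.modes[path] = mode


def inject_key(fs, key):
    """Add an SSH key for root using only VFS operations."""
    fs.make_path("/root/.ssh")
    fs.set_permissions("/root/.ssh", 0o700)
    fs.append_file("/root/.ssh/authorized_keys", key + "\n")
    fs.set_permissions("/root/.ssh/authorized_keys", 0o600)


fs = FakeVFS()
inject_key(fs, "ssh-rsa AAAA... user@example")
print(fs.read_file("/root/.ssh/authorized_keys"))
```

Because inject_key only ever sees guest-relative paths, a libguestfs backed implementation could be dropped in without the injection logic ever holding a path on the host.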

Incidentally, if you’re wondering why libguestfs does all its work inside a KVM appliance, its man page describes the security issues this approach protects against vs just directly mounting guest images on the host.