I’ve written before about how virtualization causes pain wrt keyboard handling and about the huge number of scancode/keycode sets you have to worry about. Following on from that investigative work I completely rewrote GTK-VNC’s keycode handling, so it is able to correctly translate the keycodes it receives from GTK on Linux, Win32 and OS-X, even when running against a remote X11 server on a different platform. In doing so I made sure that the tables used for doing conversions between keycode sets were not just big arrays of magic numbers in the code, as is common practice across the kernel or QEMU codebase. Instead GTK-VNC now has a CSV file containing the unadulterated mapping data along with a simple script to split out mapping tables. This data file and script have already been reused to solve the same keycode mapping problem in SPICE-GTK.
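To illustrate the idea of keeping the mapping data in a CSV file and generating lookup tables from it, here is a minimal Python sketch. It is not the GTK-VNC script: the column names and the four rows are an illustrative excerpt assumed for this example, using the usual Linux input, XT and Win32 virtual key values for those keys.

```python
import csv
import io

# Illustrative excerpt only: the real GTK-VNC data file has many more
# columns and rows, and its column names may differ.
KEYMAP_CSV = """\
name,linux,xt,win32
KEY_TAB,15,0x0f,0x09
KEY_E,18,0x12,0x45
KEY_Y,21,0x15,0x59
KEY_K,37,0x25,0x4b
"""


def build_table(csv_text, src, dst):
    """Return a dict mapping keycodes in column `src` to keycodes in column `dst`."""
    table = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        if not row[src] or not row[dst]:
            continue  # key has no equivalent in one of the two sets
        # int(..., 0) accepts both decimal ("15") and hex ("0x0f") spellings
        table[int(row[src], 0)] = int(row[dst], 0)
    return table


linux_to_win32 = build_table(KEYMAP_CSV, "linux", "win32")
win32_to_xt = build_table(KEYMAP_CSV, "win32", "xt")
print(hex(linux_to_win32[37]))  # Linux KEY_K -> Win32 'K', prints 0x4b
```

Keeping the data in one place means any pair of columns can be turned into a table on demand, rather than maintaining a hand-written array per direction.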

Fast-forward a year and a libvirt developer from Fujitsu is working on a patch to wire up QEMU’s “sendkey” monitor command to a formal libvirt API. The first design question is how the API should accept the list of keys to be injected into the guest. The QEMU monitor command accepts a list of keycode names as strings, or keycode values as hex-encoded strings. The QEMU keycode values come from what I term the “RFB” codeset, which is just the XT codeset with a slightly unusual encoding of extended keycodes. VirtualBox meanwhile has an API which wants integer keycode values, from the regular XT codeset.

One of the problems with the XT codeset is that no one can ever quite agree on what is the official way to encode extended keycodes, or whether it is even possible to encode certain types of key. There is also a usability problem with having the API require a low-level, hardware-oriented keycode set as input, in that as an application developer you might know what Win32 virtual keycode you want to generate, but have no idea what the corresponding XT keycode is. It would be preferable if you could simply directly inject a Win32 keycode to a Windows guest, or directly inject a Linux keycode to a Linux guest, etc.

After a little bit of discussion we came to the conclusion that the libvirt API should accept an array of integer keycodes, along with an enum parameter specifying what keycode set they belong to. Internally libvirt would then translate from whatever keycode set the application used, to the keycode set required by the hypervisor’s own API. Thus we got an API that looks like:

typedef enum {

VIR_KEYCODE_SET_LINUX = 0,

VIR_KEYCODE_SET_XT = 1,

VIR_KEYCODE_SET_ATSET1 = 2,

VIR_KEYCODE_SET_ATSET2 = 3,

VIR_KEYCODE_SET_ATSET3 = 4,

VIR_KEYCODE_SET_OSX = 5,

VIR_KEYCODE_SET_XT_KBD = 6,

VIR_KEYCODE_SET_USB = 7,

VIR_KEYCODE_SET_WIN32 = 8,

VIR_KEYCODE_SET_RFB = 9,

VIR_KEYCODE_SET_LAST,

} virKeycodeSet;

int virDomainSendKey(virDomainPtr domain,

unsigned int codeset,

unsigned int holdtime,

unsigned int *keycodes,

int nkeycodes,

unsigned int flags);

As with all libvirt APIs, this is also exposed in the virsh command line tool, via a new “send-key” command. As you might expect, this accepts a list of integer keycodes as parameters, along with a keycode set name. If the keycode set is omitted, the Linux keycode set is assumed by default. To be slightly more user friendly though, for the Linux, Win32 & OS-X keycode sets, we also support symbolic keycode names as an alternative to the integer values. These names are simply the names of the #define constants from the corresponding header file.

Some examples of how to use the new virsh command are

# send three strokes 'k', 'e', 'y', using xt codeset

virsh send-key dom --codeset xt 37 18 21

# send one stroke 'right-ctrl+C'

virsh send-key dom KEY_RIGHTCTRL KEY_C

# send a tab, held for 1 second

virsh send-key dom --holdtime 1000 0xf
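The rule that each key argument may be either an integer or a symbolic name can be sketched in a few lines of Python. This is not how virsh implements it; `LINUX_KEY_NAMES` is a hypothetical three-entry excerpt, with values as defined in the Linux input header.

```python
# Hypothetical excerpt of symbolic names for the Linux keycode set
# (values as defined in linux/input.h).
LINUX_KEY_NAMES = {"KEY_TAB": 15, "KEY_C": 46, "KEY_RIGHTCTRL": 97}


def parse_keys(args, names=LINUX_KEY_NAMES):
    """Turn command line tokens into integer keycodes."""
    keycodes = []
    for arg in args:
        try:
            # Base 0 accepts decimal ("37") as well as hex ("0xf")
            keycodes.append(int(arg, 0))
        except ValueError:
            if arg not in names:
                raise ValueError(f"unknown key name: {arg!r}") from None
            keycodes.append(names[arg])
    return keycodes


print(parse_keys(["KEY_RIGHTCTRL", "KEY_C"]))  # [97, 46]
print(parse_keys(["0xf"]))                     # [15]
```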

So when interacting with virtual guests you now have a choice of how to send fake keycodes. If you have a VNC or SPICE connection directly to the guest in question, you can inject keycodes over that channel, while if you have a libvirt connection to the hypervisor you can inject keycodes over that channel.

A couple of years ago Dan Walsh introduced the SELinux sandbox which was a way to confine what resources an application can access using SELinux and the Linux filesystem namespace functionality. Meanwhile we developed sVirt in libvirt to confine QEMU virtual machines, and QEMU itself has gained support for passing host filesystems straight through to the guest operating system, using a VirtIO based transport for the 9p filesystem. This got me thinking about whether it was now practical to create a sandbox based on QEMU, or rather KVM, by booting a guest with a root filesystem pointing to the host’s root filesystem (readonly of course), combined with a couple of overlays for /tmp and /home, all protected by sVirt.

One prominent factor in the practicality is how much time the KVM and kernel startup sequences add to the overall execution time of the command being sandboxed. From Richard Jones’ work on libguestfs I know that it is possible to boot to a functioning application inside KVM in < 5 seconds. The approach I take with 9pfs has a slight advantage over libguestfs because it does not incur the initial (one-time only per kernel version) delay for building a virtual appliance based on the host filesystem, since we’re able to directly access the host filesystem from the guest. The fine details will have to wait for a future blog post, but suffice to say, a stock Fedora kernel can be made to boot to the point of exec()ing the ‘init’ binary in the ramdisk in ~0.6 seconds, and the custom ‘init’ binary I use for mounting the 9p filesystems takes another ~0.2 seconds, giving a total boot time of 0.8 seconds.

Boot up time, however, is only one side of the story. For some application sandboxing scenarios, the shutdown time might be just as important as startup time. I naively thought that the kernel shutdown time would be immeasurably short. It turns out I was wrong, big time. Timestamps on the printk messages showed that the shutdown time was in fact longer than the bootup time! The telling messages were:

[ 1.486287] md: stopping all md devices.

[ 2.492737] ACPI: Preparing to enter system sleep state S5

[ 2.493129] Disabling non-boot CPUs ...

[ 2.493129] Power down.

[ 2.493129] acpi_power_off called

which point a finger towards the MD driver. I was sceptical that the MD driver could be to blame, since my virtual machine does not have any block devices at all, let alone MD devices. To be sure though, I took a look at the MD driver code to see just what it does during kernel shutdown. To my surprise the answer was blindingly obvious:

static int md_notify_reboot(struct notifier_block *this, unsigned long code, void *x)

{

struct list_head *tmp;

mddev_t *mddev;

if ((code == SYS_DOWN) || (code == SYS_HALT) || (code == SYS_POWER_OFF)) {

printk(KERN_INFO "md: stopping all md devices.\n");

for_each_mddev(mddev, tmp)

if (mddev_trylock(mddev)) {

/* Force a switch to readonly even array

* appears to still be in use. Hence

* the '100'.

*/

md_set_readonly(mddev, 100);

mddev_unlock(mddev);

}

/*

* certain more exotic SCSI devices are known to be

* volatile wrt too early system reboots. While the

* right place to handle this issue is the given

* driver, we do want to have a safe RAID driver ...

*/

mdelay(1000*1);

}

return NOTIFY_DONE;

}

In other words, regardless of whether you actually have any MD devices, it’ll impose a fixed 1 second delay into your shutdown sequence :-(

With this kernel bug fixed, the total time my KVM sandbox spends running the kernel is reduced by more than 50%, from 1.9s to 0.9s. The biggest delay is now down to SeaBIOS & QEMU which together take 2s to get from the start of QEMU main(), to finally jumping into the kernel entry point.

Traditionally, if you have manually launched a QEMU process, either as root, or as your own user, it will not be visible to/from libvirt’s QEMU driver. This is an intentional design decision because given an arbitrary QEMU process, it is very hard to determine what its current or original configuration is/was. Without knowing QEMU’s configuration, it is hard to reliably perform further operations against the guest. In general, this limitation has not proved a serious burden to users of libvirt, since there are a variety of ways to launch new guests directly with libvirt whether graphical (virt-manager) or command line driven (virt-install).

There are always exceptions to the rule, though, and one group of users who have found this a problem is the QEMU/KVM developer community itself. During the course of developing & testing QEMU code, they often need to quickly launch QEMU processes with a very specific set of command line arguments. This is hard to do with libvirt, since when there is a choice of which command line parameters to use for a feature, libvirt will pick one according to some predetermined rule. As an example, if you want to test something related to the old style ‘-drive’ parameters with QEMU, and libvirt is using the new style ‘-device + -drive’ parameters, you are out of luck & will not be able to force libvirt to use the old syntax. There are other features of libvirt that QEMU developers may well still want to take advantage of though, like virt-top or virt-viewer. Thus it is desirable to have a way to launch QEMU with arbitrary command line arguments, but still use libvirt.

A little while ago we did introduce support for adding extra QEMU specific command line arguments in the guest XML configuration, using a separate namespace. This is not entirely sufficient, or satisfactory, for the particular scenario outlined above. For this reason, we’ve now introduced a new QEMU-specific API into libvirt that allows the QEMU driver to attach to an externally launched QEMU process. This API is not in the main library, but rather in the separate libvirt-qemu.so library. Use of this library by applications is strongly discouraged and many distros will not support its use in production deployments. It is intended primarily for developer / troubleshooting scenarios. This QEMU specific command is also exposed in virsh, via a QEMU specific command ‘qemu-attach’. So now it is possible for the QEMU developers to launch a QEMU process and connect it to libvirt:

$ qemu-kvm \

-cdrom ~/demo.iso \

-monitor unix:/tmp/myexternalguest,server,nowait \

-name myexternalguest \

-uuid cece4f9f-dff0-575d-0e8e-01fe380f12ea \

-vnc 127.0.0.1:1 &

$ QEMUPID=$!

$ virsh qemu-attach $QEMUPID

Domain myexternalguest attached to pid 14725

Once attached, most of the normal libvirt commands and tools will be able to at least see the guest. For example, query its status

$ virsh list

Id Name State

----------------------------------

1 myexternalguest running

$ virsh dominfo myexternalguest

Id: 1

Name: myexternalguest

UUID: cece4f9f-dff0-575d-0e8e-01fe380f12ea

OS Type: hvm

State: running

CPU(s): 1

CPU time: 15.1s

Max memory: 65536 kB

Used memory: 65536 kB

Persistent: no

Autostart: disable

Security model: selinux

Security DOI: 0

Security label: unconfined_u:unconfined_r:unconfined_qemu_t:s0-s0:c0.c1023 (permissive)

libvirt reverse engineers an XML configuration for the guest based on the command line arguments it finds for the process in /proc/$PID/cmdline and /proc/$PID/environ. This uses the same code that is available via the virsh domxml-from-native command. The important caveat is that the QEMU process being attached to must not have had its configuration modified via the monitor. If that has been done, then the /proc command line will no longer match the current QEMU process state.

$ virsh dumpxml myexternalguest

<domain type='kvm' id='1'>

<name>myexternalguest</name>

<uuid>cece4f9f-dff0-575d-0e8e-01fe380f12ea</uuid>

<memory>65536</memory>

<currentMemory>65536</currentMemory>

<vcpu>1</vcpu>

<os>

<type arch='i686' machine='pc-0.14'>hvm</type>

</os>

<features>

<acpi/>

<pae/>

</features>

<clock offset='utc'/>

<on_poweroff>destroy</on_poweroff>

<on_reboot>restart</on_reboot>

<on_crash>destroy</on_crash>

<devices>

<emulator>/usr/bin/qemu-kvm</emulator>

<disk type='file' device='cdrom'>

<source file='/home/berrange/demo.iso'/>

<target dev='hdc' bus='ide'/>

<readonly/>

<address type='drive' controller='0' bus='1' unit='0'/>

</disk>

<controller type='ide' index='0'>

<address type='pci' domain='0x0000' bus='0x00' slot='0x01' function='0x1'/>

</controller>

<input type='mouse' bus='ps2'/>

<graphics type='vnc' port='5901' autoport='no' listen='127.0.0.1'/>

<video>

<model type='cirrus' vram='9216' heads='1'/>

<address type='pci' domain='0x0000' bus='0x00' slot='0x02' function='0x0'/>

</video>

<memballoon model='virtio'>

<address type='pci' domain='0x0000' bus='0x00' slot='0x03' function='0x0'/>

</memballoon>

</devices>

<seclabel type='static' model='selinux' relabel='yes'>

<label>unconfined_u:unconfined_r:unconfined_t:s0-s0:c0.c1023</label>

</seclabel>

</domain>
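As a rough illustration of where this reverse engineering starts: /proc/$PID/cmdline holds the process arguments separated by NUL bytes. The Python sketch below is not libvirt's parser; it only splits that format and picks out the ‘-name’ and ‘-uuid’ values, using a hard-coded sample in place of a real process.

```python
def split_cmdline(raw):
    """Split the NUL-separated contents of /proc/<pid>/cmdline into argv."""
    parts = raw.split(b"\0")
    if parts and parts[-1] == b"":
        parts.pop()  # the file ends with a NUL terminator
    return [part.decode() for part in parts]


def option_value(argv, option):
    """Return the argument following `option`, or None if it is absent."""
    for i, arg in enumerate(argv[:-1]):
        if arg == option:
            return argv[i + 1]
    return None


# Sample data standing in for open(f"/proc/{pid}/cmdline", "rb").read()
sample = (b"qemu-kvm\0-cdrom\0/home/berrange/demo.iso\0"
          b"-name\0myexternalguest\0"
          b"-uuid\0cece4f9f-dff0-575d-0e8e-01fe380f12ea\0")
argv = split_cmdline(sample)
print(option_value(argv, "-name"), option_value(argv, "-uuid"))
```

This also shows why the caveat matters: anything changed later through the monitor never appears in this argument list.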

Tools like virt-viewer will be able to attach to the guest just fine

$ ./virt-viewer --verbose myexternalguest

Opening connection to libvirt with URI

Guest myexternalguest is running, determining display

Guest myexternalguest has a vnc display

Opening direct TCP connection to display at 127.0.0.1:5901

Finally, you can of course kill the attached process

$ virsh destroy myexternalguest

Domain myexternalguest destroyed

The important caveats when using this feature are

- The guest config must not be modified using monitor commands between the time the QEMU process is started and when it is attached to the libvirt driver

- There must be a monitor socket for the guest using the ‘unix’ protocol as shown in the example above. The socket location does not matter, but it must be a server socket

- It is strongly recommended to specify a name using ‘-name’, otherwise libvirt will auto-assign a name based on the $PID
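The second and third caveats can be checked mechanically before attempting an attach. The following Python sketch is a hypothetical helper, not part of libvirt or virsh, and it only understands the ‘-monitor unix:PATH,server,...’ spelling used in the example above.

```python
def attach_problems(argv):
    """Return a list of reasons why this QEMU argv may not attach cleanly."""
    problems = []
    monitors = [argv[i + 1] for i, arg in enumerate(argv[:-1])
                if arg == "-monitor"]
    # Look for a UNIX socket monitor in server mode
    has_server_socket = False
    for monitor in monitors:
        target, *options = monitor.split(",")
        if target.startswith("unix:") and "server" in options:
            has_server_socket = True
    if not has_server_socket:
        problems.append("no '-monitor unix:PATH,server' argument")
    if "-name" not in argv:
        problems.append("no '-name' argument, the name would be based on the PID")
    return problems


good = ["qemu-kvm", "-monitor", "unix:/tmp/myexternalguest,server,nowait",
        "-name", "myexternalguest"]
print(attach_problems(good))                             # []
print(attach_problems(["qemu-kvm", "-monitor", "stdio"]))
```

The first caveat, that nothing was changed via the monitor after startup, cannot be verified from the command line at all, which is why it is left to the person launching QEMU.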

To reinforce the earlier point, this feature is ONLY targeted at QEMU developers and other people who want to do ad-hoc testing/troubleshooting of QEMU with precise control over all command line arguments. If things break when using this feature, you get to keep both pieces. To reinforce this, when attaching to an externally launched guest, it will be marked as tainted which may limit the level of support a distro / vendor provides. Anyone writing serious production quality virtualization applications should NEVER use this feature. It may make babies cry and kick cute kittens.

This feature will be available in the next release of libvirt, currently planned to be version 0.9.4, sometime near to July 31st, 2011

With World IPv6 Day last week there have been a few people trying to setup IPv6 connectivity for their virtual guests with libvirt and KVM. For those whose guests are using bridged networking to their LAN, there is really not much to say on the topic. If your LAN has IPv6 enabled and your virtualization host is getting an IPv6 address, then your guests can get IPv6 addresses in exactly the same manner, since they appear directly on the LAN. For those who are using routed / NATed networking with KVM, via the “default” virtual network libvirt creates out of the box, there is a little more work to do. That is what this blog posting will attempt to illustrate.

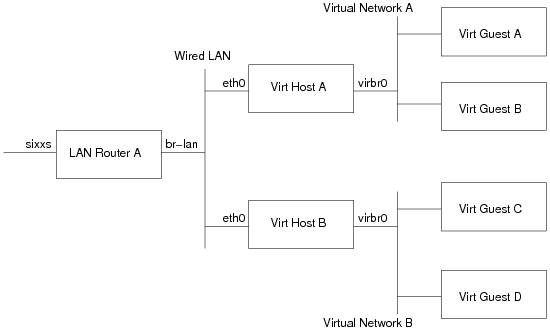

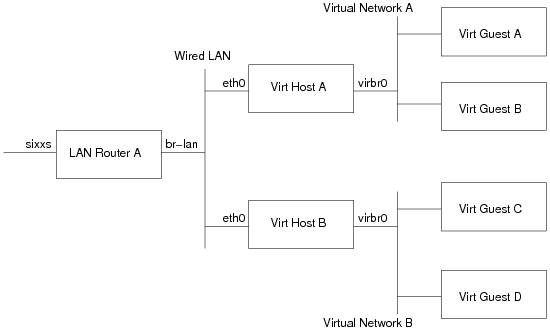

Network architecture

Before continuing further, it probably helps to have a simple network architecture diagram

- LAN Router A: This is the machine which provides IPv6 connectivity to machines on the wired LAN. In my case this is a LinkSys WRT 54GL, running OpenWRT, with an IPv6 tunnel + subnet provided by SIXXS

- Virt Host A/B: These are the 2 hosts which run virtual machines. They are initially getting their IPv6 addresses via stateless autoconf, thanks to the RADVD program on LAN Router A sending out router advertisements.

- Virtual Network A/B: These are the libvirt default virtual networks on Virt Hosts A/B respectively. Out of the box, they both come with an IPv4-only NAT setup under 192.168.122.0/24 using bridge virbr0.

- Virt Guest A/B: These are the virtual machines running on Virt Host A

- Virt Guest C/D: These are the virtual machines running on Virt Host B

The goal is to provide IPv6 connectivity to Virt Guests A, B, C and D, such that every guest can talk to every other guest, every other LAN host and the internet. Every LAN host should also be able to talk to every Virt Guest, and (firewall permitting) any machine on the entire IPv6 internet should be able to connect to any guest.

Initial network configuration

The initial configuration of the LAN is as follows (NB: my real network addresses have been substituted for fake ones). Details on this kind of setup can be found in countless howtos on the web, so I won’t get into details on how to configure your LAN/tunnel. All that’s important here is the network addressing in use

LAN Router A

The network interface for the AICCU tunnel to my SIXXS POP is called ‘sixxs’, and was assigned the address 2000:beef:cafe::1/64. The ISP also assigned a nice large subnet for use on the site, 2000:dead:beef::/48. The interface for the Wired LAN is called ‘br-lan’. Individual networks are usually assigned a /64, so with the /48 assigned, I have enough addresses to setup 65536 different networks within my site. Skipping the ‘0’ network, for sake of clarity, we give the Wired LAN the second network, 2000:dead:beef:1::/64, and configure the interface ‘br-lan’ with the global IPv6 address 2000:dead:beef:1::1/64. It also has a link local address of fe80::200:ff:fe00:0/64. To allow hosts on the Wired LAN to get IPv6 addresses, the router is also running the radvd daemon, advertising the network prefix. The radvd config on the router looks like

interface br-lan

{

AdvSendAdvert on;

AdvManagedFlag off;

AdvOtherConfigFlag off;

prefix 2000:dead:beef:1::/64

{

AdvOnLink on;

AdvAutonomous on;

AdvRouterAddr off;

};

};

The address/route configuration looks like this

# ip -6 addr

1: lo: <LOOPBACK,UP>

inet6 ::1/128 scope host

2: br-lan: <BROADCAST,MULTICAST,UP>

inet6 fe80::200:ff:fe00:0/64 scope link

inet6 2000:dead:beef:1::1/64 scope global

# ip -6 route

2000:beef:cafe::/64 dev sixxs metric 256 mtu 1280 advmss 1220

2000:dead:beef:1::/64 dev br-lan metric 256 mtu 1500 advmss 1440

fe80::/64 dev br-lan metric 256 mtu 1500 advmss 1440

ff00::/8 dev br-lan metric 256 mtu 1500 advmss 1440

default via 2000:beef:cafe:1 dev sixxs metric 1024 mtu 1280 advmss 1220

Virt Host A

The network interface for the wired LAN is called ‘eth0’ and is getting an address via stateless autoconf. The NIC has a MAC address 00:11:22:33:44:0a, so it has a link-local IPv6 address of fe80::211:22ff:fe33:440a/64, and via auto-config gets a global address of 2000:dead:beef:1:211:22ff:fe33:440a/64. The address/route configuration looks like

# ip -6 addr

1: lo: mtu 16436

inet6 ::1/128 scope host

3: eth0: mtu 1500 qlen 1000

inet6 2000:dead:beef:1:211:22ff:fe33:440a/64 scope global dynamic

inet6 fe80::211:22ff:fe33:440a/64 scope link

# ip -6 route

2000:dead:beef:1::/64 dev eth0 proto kernel metric 256 mtu 1500 advmss 1440

fe80::/64 dev eth0 proto kernel metric 256 mtu 1500 advmss 1440

default via fe80::200:ff:fe00:0 dev eth0 proto static metric 1024 mtu 1500 advmss 1440

Virt Host B

The network interface for the wired LAN is called ‘eth0’ and is getting an address via stateless autoconf. The NIC has a MAC address 00:11:22:33:44:0b, so it has a link-local IPv6 address of fe80::211:22ff:fe33:440b/64, and via auto-config gets a global address of 2000:dead:beef:1:211:22ff:fe33:440b/64.

# ip -6 addr

1: lo: mtu 16436

inet6 ::1/128 scope host

3: eth0: mtu 1500 qlen 1000

inet6 2000:dead:beef:1:211:22ff:fe33:440b/64 scope global dynamic

inet6 fe80::211:22ff:fe33:440b/64 scope link

# ip -6 route

2000:dead:beef:1::/64 dev eth0 proto kernel metric 256 mtu 1500 advmss 1440

fe80::/64 dev eth0 proto kernel metric 256 mtu 1500 advmss 1440

default via fe80::200:ff:fe00:0 dev eth0 proto static metric 1024 mtu 1500 advmss 1440

Adjusted network configuration

Both Virt Host A and B have a virtual network, so the first thing that needs to be done is to assign an IPv6 subnet for each of them. The /48 subnet has enough space to create 65536 /64 networks, so we just need to pick a couple more. In this case we’ll assign 2000:dead:beef:a::/64 to the default virtual network on Virt Host A, and 2000:dead:beef:b::/64 to Virt Host B.
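The subnet arithmetic is easy to check with Python's standard ipaddress module. A /48 leaves 64 - 48 = 16 bits for the network number, so there are 2^16 = 65536 possible /64 networks. The helper name `nth_subnet` is just something made up for this sketch.

```python
import ipaddress

site = ipaddress.IPv6Network("2000:dead:beef::/48")

# Number of /64 networks that fit in the /48: 2 ** (64 - 48)
count = 2 ** (64 - site.prefixlen)


def nth_subnet(site, n, new_prefix=64):
    """Return the n-th /64 inside `site`, counting from 0."""
    if not 0 <= n < 2 ** (new_prefix - site.prefixlen):
        raise ValueError("subnet index out of range")
    # Shift the network number into place just above the 64 host bits
    base = int(site.network_address) + (n << (128 - new_prefix))
    return ipaddress.IPv6Network((base, new_prefix))


print(count)                  # 65536
print(nth_subnet(site, 0x1))  # 2000:dead:beef:1::/64 (wired LAN)
print(nth_subnet(site, 0xa))  # 2000:dead:beef:a::/64 (Virt Host A)
print(nth_subnet(site, 0xb))  # 2000:dead:beef:b::/64 (Virt Host B)
```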

LAN Router A

The first configuration task is to tell the LAN router how each of these new networks can be reached. This requires adding a static route for each subnet, directing traffic to the link local address of the respective Virt Host. You might think you can use the global address of the virt host, rather than its link local address. I had tried that at first, but strange things happened, so sticking to the link local addresses seems best

2000:dead:beef:a::/64 via fe80::211:22ff:fe33:440a dev br-lan metric 256 mtu 1500 advmss 1440

2000:dead:beef:b::/64 via fe80::211:22ff:fe33:440b dev br-lan metric 256 mtu 1500 advmss 1440

One other thing to beware of is that the ‘ip6tables’ FORWARD chain may have a rule which prevents forwarding of traffic. Make sure traffic can flow to/from the 2 new subnets. In my case I added a generic rule that allows any traffic that originates on br-lan, to be sent back on br-lan. Traffic from ‘sixxs’ (the public internet) is only allowed in if it is related to an existing connection, or a whitelisted host I own.

Virt Host A

Remember how all hosts on the wired LAN can automatically configure their own IPv6 addresses / routes using stateless autoconf, thanks to radvd? Well, unfortunately, now that the virt host is going to start routing traffic to/from the guests, you can’t use autoconf anymore :-( By default, Linux stops accepting router advertisements on an interface once IPv6 forwarding is enabled. So the first job is to alter the host eth0 configuration, so that it uses a statically configured IPv6 address, or gets an address from DHCPv6. If you skip this bit, things may appear to work fine at first, but next time you reconnect to the LAN, autoconf will fail.

Now that the host is not using autoconf, it is time to reconfigure libvirt to add IPv6 to the virtual network. To do this, we stop the virtual network, and then edit its XML

# virsh net-destroy default

# virsh net-edit default

....vi launches..

In the editor, we need to insert a snippet of XML giving details of the subnet assigned to this host, 2000:dead:beef:a::/64. Again we pick address ‘1’ for the host interface, virbr0.

<ip family='ipv6' address='2000:dead:beef:a::1' prefix='64'/>

After exiting the editor, simply start the network again

# virsh net-start default

If all went to plan, virbr0 will now have an IPv6 address, and a route will be present. There will also be an radvd process running on the host advertising the network.

/usr/sbin/radvd --debug 1 --config /var/lib/libvirt/radvd/default-radvd.conf --pidfile /var/run/libvirt/network/default-radvd.pid-bin

If you look at the auto-generated configuration file, it should contain something like

interface virbr0

{

AdvSendAdvert on;

AdvManagedFlag off;

AdvOtherConfigFlag off;

prefix 2000:dead:beef:a::1/64

{

AdvOnLink on;

AdvAutonomous on;

AdvRouterAddr off;

};

};

Virt Host B

As with the previous Virt Host A, the first configuration task is to switch the host eth0 from using autoconf, to a static IPv6 address configuration, or DHCPv6. Then it is simply a case of running the same virsh commands, but using this host’s subnet, 2000:dead:beef:b::/64

<ip family='ipv6' address='2000:dead:beef:b::1' prefix='64'/>
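If the same edit has to be repeated on several hosts, it could be scripted rather than done by hand in the editor. The Python sketch below only manipulates an XML string; the sample network definition is illustrative rather than copied from a real host, and feeding the result back to libvirt is left out.

```python
import xml.etree.ElementTree as ET

# Illustrative network definition, similar in shape to what
# "virsh net-dumpxml default" prints; not copied from a real host.
NETWORK_XML = """\
<network>
  <name>default</name>
  <bridge name='virbr0'/>
  <forward mode='nat'/>
  <ip address='192.168.122.1' netmask='255.255.255.0'/>
</network>
"""


def add_ipv6(network_xml, address, prefix=64):
    """Return the network XML with an IPv6 <ip> element appended."""
    root = ET.fromstring(network_xml)
    if any(ip.get("family") == "ipv6" for ip in root.findall("ip")):
        raise ValueError("network already has an IPv6 address")
    ET.SubElement(root, "ip", family="ipv6", address=address,
                  prefix=str(prefix))
    return ET.tostring(root, encoding="unicode")


print(add_ipv6(NETWORK_XML, "2000:dead:beef:b::1"))
```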

The guest setup

At this stage, it should be possible to ping & ssh to the address of the ‘virbr0’ interface of each virt host, from anywhere on the wired LAN. If this isn’t working, or if ping works, but ssh fails, then most likely the LAN router has missing/incorrect routes for the new subnets, or there is a firewall blocking traffic on the LAN router, or Virt Host A/B. In particular check the FORWARD chain in ip6tables.

Assuming this all works, though, it should now be possible to start some guests on Virt Host A / B. In this case the guest will of course have a NIC configuration that uses the ‘default’ network:

<interface type='network'>

<mac address='52:54:00:e6:1f:01'/>

<source network='default'/>

</interface>

Starting up this guest, autoconfiguration should take place, resulting in it getting an address based on the virtual network prefix and the MAC address

# ip -6 addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 16436

inet6 ::1/128 scope host

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qlen 1000

inet6 2000:dead:beef:a:5054:ff:fee6:1f01/64 scope global dynamic

inet6 fe80::5054:ff:fee6:1f01/64 scope link

# ip -6 route

2000:dead:beef:a::/64 dev eth0 proto kernel metric 256 mtu 1500 advmss 1440

fe80::/64 dev eth0 proto kernel metric 256 mtu 1500 advmss 1440

default via fe80::211:22ff:fe33:440a dev eth0 proto kernel metric 1024 mtu 1500 advmss 1440
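The way the address is derived from the prefix and the MAC address is the modified EUI-64 rule: flip the universal/local bit in the first octet of the MAC, and insert ff:fe in the middle. A small Python sketch of that rule, using the standard ipaddress module (the function name is made up for this example):

```python
import ipaddress


def eui64_address(prefix, mac):
    """Combine a /64 prefix with a MAC address using the modified EUI-64 rule."""
    net = ipaddress.IPv6Network(prefix)
    if net.prefixlen != 64:
        raise ValueError("stateless autoconf expects a /64 prefix")
    octets = [int(part, 16) for part in mac.split(":")]
    if len(octets) != 6:
        raise ValueError("expected a 48-bit MAC address")
    octets[0] ^= 0x02                             # flip the universal/local bit
    iid = octets[:3] + [0xFF, 0xFE] + octets[3:]  # insert ff:fe in the middle
    return net[int.from_bytes(bytes(iid), "big")]


# The guest NIC from the XML above, on Virt Host A's virtual network
print(eui64_address("2000:dead:beef:a::/64", "52:54:00:e6:1f:01"))
# Virt Host A's link-local address, which is the guest's default gateway
print(eui64_address("fe80::/64", "00:11:22:33:44:0a"))
```

The two results match the global address and the default route shown in the guest output above.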

A guest running on Host A ought to be able to connect to a guest on Host B, and vice versa, and any host on the LAN should be able to connect to any guest.

A common question from developers/users new to libvirt is what the benefit of using libvirt is over directly scripting/developing against QEMU/KVM. This blog posting attempts to answer that, by outlining features exposed in the libvirt QEMU/KVM driver that would not be automatically available to users of the lower level QEMU command line/monitor.

“All right, but apart from the sanitation, medicine, education, wine, public order, irrigation, roads, the fresh water system and public health, what have the Romans ever done for us?”

Insurance against QEMU/KVM being replaced by new $SHINY virt thing

Linux virtualization technology has been through many iterations. In the beginning there was User Mode Linux, which was widely used by many ISPs offering Linux virtual hosting. Then along came Xen, which was shipped by many enterprise distributions and replaced much usage of UML. QEMU has been around for a long time, but when KVM was added to the Linux kernel and integrated with QEMU, it became the new standard for Linux host virtualization, often replacing the usage of Xen. Application developers may think

“QEMU/KVM is the Linux virtualization standard for the future, so why do we need a portable API?”

To think this way is to ignore the lessons of history, which are that every virtualization technology that has existed in Linux thus far has been replaced or rewritten or obsoleted. The current QEMU/KVM userspace implementation may turn out to be the exception to the rule, but do you want to bet on that? There is already a new experimental KVM userspace application being developed by a group of LKML developers which may one day replace the current QEMU based KVM userspace. libvirt exists to provide application developers an insurance policy should this come to pass, by isolating application code from the specific implementation of the low level userspace infrastructure.

Long term stable API and XML format

The libvirt API is an append-only API, so once added, an API will never be removed or changed. This ensures that applications can expect to operate unchanged across multiple major RHEL releases, even if the underlying virtualization technology changes the way it operates. Likewise the XML format is append-only, so even if the underlying virt technology changes its configuration format, apps will continue to work unchanged.

Automatic compliance with changes in KVM command line syntax “best practice”

As new releases of KVM come out, the “best practice” recommendations for invoking KVM processes evolve. The libvirt QEMU driver attempts to always follow the latest “best practice” for invoking the KVM version it finds

Example: Disk specification changes

- v1: Original QEMU syntax:

-hda filename

- v2: RHEL5 era KVM syntax

-drive file=filename,if=ide,index=0

- v3: RHEL6 era KVM syntax

-drive file=filename,id=drive-ide0-0-0,if=none \

-device ide-drive,bus=ide.0,unit=0,drive=drive-ide0-0-0,id=ide-0-0-0

- v4: (possible) RHEL-7 era KVM syntax:

-blockdev ...someargs... \

-device ide-drive,bus=ide.0,unit=0,blockdev=bdev-ide0-0-0,id=ide-0-0-0
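To make the idea concrete, here is a Python sketch of picking a disk syntax based on what the binary supports. It is not libvirt's code: the style names and the capability strings are hypothetical, and only the first three syntaxes from the list above are covered since the fourth was still speculative.

```python
def disk_args(filename, style):
    """Build QEMU arguments for one IDE disk in the requested syntax."""
    if style == "hda":            # v1: original QEMU syntax
        return ["-hda", filename]
    if style == "drive":          # v2: single -drive argument
        return ["-drive", f"file={filename},if=ide,index=0"]
    if style == "drive+device":   # v3: separate backend and device
        drive_id = "drive-ide0-0-0"
        return [
            "-drive", f"file={filename},id={drive_id},if=none",
            "-device",
            f"ide-drive,bus=ide.0,unit=0,drive={drive_id},id=ide-0-0-0",
        ]
    raise ValueError(f"unknown style: {style}")


def pick_style(capabilities):
    """Choose the newest syntax available (capability names are hypothetical)."""
    if "device" in capabilities:
        return "drive+device"
    if "drive" in capabilities:
        return "drive"
    return "hda"


print(disk_args("filename", pick_style({"drive", "device"})))
```

The application only ever supplies the filename; which of the three forms ends up on the command line depends entirely on the detected capabilities.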

Isolation against breakage in KVM command line syntax

Although QEMU attempts to maintain compatibility of command line flags across releases, there may have been cases where options have been removed / changed. This has typically occurred as a result of changes which were in the KVM branch, and then done differently when the code was merged back into the main QEMU GIT repository. libvirt detects what is required for the QEMU binary and uses the appropriate supported syntax:

Example: Boot order changes for virtio.

- v1: Original QEMU syntax (didn’t support virtio)

-boot c

- v2: RHEL5 era KVM syntax

-drive file=filename,if=virtio,index=0,boot=on

- v3: RHEL-6.0 KVM syntax

-drive file=filename,id=drive-virtio0-0-0,if=none,boot=on \

-device virtio-blk-pci,bus=pci0.0,addr=1,drive=drive-virtio0-0-0,id=virtio-0-0-0

- v4: RHEL-6.1 KVM syntax (boot=on was removed in the update, changing syntax wrt RHEL-6.0)

-drive file=filename,id=drive-virtio0-0-0,if=none,bootindex=1 \

-device virtio-blk-pci,bus=pci0.0,addr=1,drive=drive-virtio0-0-0,id=virtio-0-0-0

Transparently take advantage of new features in KVM

Some new features introduced in KVM can be automatically enabled / used by the libvirt QEMU driver without requiring changes to applications using libvirt. Applications thus benefit from these new features without expending any further development effort

Example: Stable machine ABI in RHEL-6.0

The application provided XML requests a generic ‘pc’ machine type. libvirt automatically queries KVM to determine the supported machine types and canonicalizes ‘pc’ to the stable versioned machine type ‘pc-0.12’ and preserves this in the XML configuration. Thus even if the host is updated to KVM 0.13, existing guests will continue to run with the ‘pc-0.12’ ABI, and thus avoid potential driver breakage or guest OS re-activation. It also makes it possible to migrate the guest between hosts running different versions of KVM.

Example: Stable PCI device addressing in RHEL-6.0

The application provided XML requests 4 PCI devices (balloon, 2 disks & a NIC). libvirt automatically assigns PCI addresses to these devices and ensures that every time the guest is launched the exact same PCI addresses are used for each device. It will also manage PCI addresses when doing PCI device hotplug and unplug, so the sequence boot; unplug nic; migrate will result in stable PCI addresses on the target host.
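The principle behind stable addressing is simple: an address, once assigned, is recorded with the device and never recomputed. The Python sketch below is a toy allocator under that assumption, not libvirt's algorithm; the device names and the choice of slot 3 as the first free slot are assumptions for the example.

```python
def assign_pci_slots(devices, first_free=3, last=31):
    """Give every device without a slot the lowest unused one, in place.

    `devices` is a list of dicts. A device that already carries a "slot"
    keeps it, which is what keeps addresses stable across launches.
    """
    used = {dev["slot"] for dev in devices if "slot" in dev}
    candidates = (s for s in range(first_free, last + 1) if s not in used)
    for dev in devices:
        if "slot" not in dev:
            try:
                dev["slot"] = next(candidates)
            except StopIteration:
                raise RuntimeError("no free PCI slots") from None
    return devices


guest = [{"name": "balloon"}, {"name": "disk0"},
         {"name": "disk1"}, {"name": "nic0"}]
assign_pci_slots(guest)

# Unplug the first disk, then hotplug a second NIC: the remaining
# devices keep their slots, and the new device reuses the freed one.
guest = [dev for dev in guest if dev["name"] != "disk0"] + [{"name": "nic1"}]
assign_pci_slots(guest)
print({dev["name"]: dev["slot"] for dev in guest})
```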

Automatic compliance with changes in KVM monitor syntax best practice

In the same way that KVM command line argument “best practice” changes between releases, so does the monitor “best practice”. The libvirt QEMU driver aims to always be in compliance with the “best practice” recommendations for the version of KVM being used.

Example: monitor protocol changes

- v1: Human parsable monitor in RHEL5 era or earlier KVM

- v2: JSON monitor in RHEL-6.0

- v3: JSON monitor, with HMP passthrough in RHEL-6.1

Isolation against breakage in KVM monitor commands

Upstream QEMU releases have generally avoided changing monitor command syntax, but the KVM fork of QEMU has not been so lucky when merging changes back into mainline QEMU. Likewise, OS distros sometimes introduce custom commands in their branches.

Example: Method for setting VNC/SPICE passwords

- v1: RHEL-5 using the ‘change’ command only for VNC, or ‘set_ticket’ for SPICE

'change vnc 123456'

'set_ticket 123456'

- v2: RHEL-6.0 using the (non-upstream) ‘__com.redhat__set_password’ for SPICE or VNC

{ "execute": "__com.redhat__set_password", "arguments": { "protocol": "spice", "password": "123456", "expiration": "60" } }

- v3: RHEL-6.1 using the upstream ‘set_password’ and ‘expire_password’ commands

{ "execute": "set_password", "arguments": { "protocol": "spice", "password": "123456" } }

{ "execute": "expire_password", "arguments": { "protocol": "spice", "time": "+60" } }
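The kind of isolation described here can be sketched as a single function that hides the three syntaxes behind one call. The generation labels and the function name are hypothetical, and the command strings simply mirror the examples above rather than any authoritative reference.

```python
import json


def password_commands(generation, protocol, password, expiry_seconds=None):
    """Return the monitor commands needed to set a graphics password."""
    if generation == "rhel5":
        # Human monitor: separate commands for VNC and SPICE, no expiry.
        if protocol == "vnc":
            return ["change vnc %s" % password]
        return ["set_ticket %s" % password]
    if generation == "rhel6.0":
        # Non-upstream JSON command carrying password and expiry together.
        args = {"protocol": protocol, "password": password}
        if expiry_seconds is not None:
            args["expiration"] = str(expiry_seconds)
        return [json.dumps({"execute": "__com.redhat__set_password",
                            "arguments": args})]
    if generation == "rhel6.1":
        # Upstream JSON commands: password and expiry are set separately.
        commands = [json.dumps({"execute": "set_password",
                                "arguments": {"protocol": protocol,
                                              "password": password}})]
        if expiry_seconds is not None:
            commands.append(json.dumps(
                {"execute": "expire_password",
                 "arguments": {"protocol": protocol,
                               "time": "+%d" % expiry_seconds}}))
        return commands
    raise ValueError("unknown monitor generation: %s" % generation)


old = password_commands("rhel5", "spice", "123456")
new = password_commands("rhel6.1", "spice", "123456", expiry_seconds=60)
```

The caller asks for the same thing in every case; only the function knows which syntax the running KVM expects.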

Security isolation with SELinux / AppArmor

When all guests run under the same user ID, any single KVM process which is exploited by a malicious guest will result in compromise of all guests running on the host. If the management software runs as the same user ID as the KVM processes, the management software can also be exploited.

sVirt integration in libvirt ensures that every guest is assigned a dedicated security context, which strictly confines what resources it can access. Thus even if a guest can read/write files based on matching UID/GID, the sVirt layer will block access, unless the file / resource was explicitly listed in the guest configuration file.

Security isolation using POSIX DAC

An additional security driver uses traditional POSIX DAC capabilities to isolate guests from the host, by running as an unprivileged UID and GID pair. Future enhancements will run each individual guest under a dedicated UID.

Security isolation using Linux container namespaces

Future releases will take advantage of recent advances in Linux container namespace capabilities. Every QEMU process will be placed into a dedicated PID namespace, preventing QEMU from seeing any other processes on the system, should it be exploited. A dedicated network namespace will block access to all host network devices, only allowing access to TAP device FDs, preventing an exploited guest from making arbitrary network connections to the outside world. When QEMU gains support for a ‘fd:’ disk protocol, a dedicated filesystem namespace will provide a very secure chrooted environment where it can only use file descriptors passed in from libvirt. UID/GID namespaces will allow separation of QEMU UID/GID for each process, even though from the host POV all processes will be under the same UID/GID.

Avoidance of shell code for most operations

Use of the shell for invoking management commands is susceptible to exploit by users providing data which gets interpreted as shell metacharacters. It is very hard to get escaping applied correctly, so libvirt has an advanced set of APIs for invoking commands which avoid all use of the shell. They are also able to guarantee that no file descriptors from the management layer leak down into the QEMU process where they could be exploited.
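The same principle can be demonstrated outside libvirt. The sketch below uses Python's subprocess module, not libvirt's own C command APIs, to run a child process from an argument vector with no shell involved, so a hostile guest name arrives as one literal argument. The guest name and helper name are made up for the example.

```python
import subprocess
import sys


def run_without_shell(argv):
    """Run argv directly via exec, so no shell ever parses the arguments."""
    result = subprocess.run(
        argv,
        shell=False,       # never hand the command line to /bin/sh
        close_fds=True,    # do not leak unrelated descriptors to the child
        stdout=subprocess.PIPE,
        stderr=subprocess.PIPE,
        check=True,
    )
    return result.stdout.decode()


# A name full of shell metacharacters; it is only ever treated as data.
hostile_name = "guest; touch /tmp/pwned $(id) `id`"
echoed = run_without_shell(
    [sys.executable, "-c", "import sys; sys.stdout.write(sys.argv[1])", hostile_name]
)
```

Since the arguments are never joined into a single string, there is nothing to escape in the first place.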

Secure remote access to management APIs

The libvirt local library API can be exposed over TCP using TLS, x509 certificates and/or Kerberos. This provides secure remote access to all KVM management APIs, with parity of functionality vs local API usage. The secure remote access is a validated part of the Common Criteria certifications.

Operation audit trails

All operations where there is an association between a virtual machine and a host resource will result in one or more audit records being generated via the Linux auditing subsystem. This provides a clear record of key changes in the virtualization state. This functionality is typically a mandatory requirement for deployment into government / defence / financial organizations.

Security certification for Common Criteria

libvirt’s QEMU/KVM driver has gone through Common Criteria certification. This provides assurance for the host management virtualization stack, which again, is typically a mandatory requirement for deployment into government / defense related organizations. The core components in this certification are the sVirt security model and the audit subsystem integration.

Integration with cgroups for resource control

All guests are automatically placed into cgroups. This allows control of CPU scheduler priority between guests, block I/O bandwidth tunables and memory utilization tunables. The devices cgroups controller provides a further line of defence, blocking guest access to char & block devices on the host in the unlikely event that another layer of protection fails. The cpu accounting group will also enable querying of the per-physical CPU utilization for guests, which is not available via any traditional /proc file.

Integration with libnuma for memory/cpu affinity

QEMU does not provide any native support for controlling NUMA affinity via its command line. libvirt integrates with libnuma and/or sched_setaffinity for memory and CPU pinning respectively.
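Pinning requests are usually written as a CPU list such as "0-3,^2", meaning CPUs 0 to 3 except 2. A minimal sketch of turning such a string into a set of CPU numbers follows. It is a simplification: exclusions are applied to the whole list rather than only to the preceding range, and the function name is hypothetical.

```python
def parse_cpuset(spec):
    """Parse a CPU list like "0-3,^2" into a set of CPU numbers."""
    included, excluded = set(), set()
    for item in spec.split(","):
        item = item.strip()
        target = included
        if item.startswith("^"):
            # A leading caret marks a CPU (or range) to leave out.
            target, item = excluded, item[1:]
        if "-" in item:
            first, last = (int(part) for part in item.split("-", 1))
            target.update(range(first, last + 1))
        else:
            target.add(int(item))
    return included - excluded


pinned = parse_cpuset("0-3,^2")
```

On Linux the resulting set is the sort of value Python's os.sched_setaffinity(pid, cpus) accepts, which wraps the same system call mentioned above.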

CPU compatibility guarantees upon migration

libvirt directly models the host and guest CPU feature sets. A variety of policies are available to control what features become visible to the guest, and strictly validate compatibility of host CPUs across migration. Further APIs are provided to allow apps to query CPU compatibility ahead of time, and to enable computation of common feature sets between CPUs. No comparable API exists elsewhere.
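Conceptually, the common feature set is an intersection, and the compatibility check is a subset test. The sketch below models just that with plain sets. The host names, feature lists and function names are illustrative assumptions, and the real CPU model handling is considerably richer.

```python
def baseline_features(cpus):
    """Return the features present on every CPU in `cpus` (name -> features)."""
    feature_sets = [set(features) for features in cpus.values()]
    return set.intersection(*feature_sets)


def missing_on_host(guest_features, host_features):
    """List guest-visible features that the host cannot provide."""
    return sorted(set(guest_features) - set(host_features))


# Hypothetical hosts with slightly different feature sets.
hosts = {
    "host-a": {"sse2", "ssse3", "sse4.2", "aes"},
    "host-b": {"sse2", "ssse3", "sse4.2"},
}
common = baseline_features(hosts)

# A guest that was given "aes" cannot safely move to host-b.
problems = missing_on_host({"sse2", "aes"}, hosts["host-b"])
```

Exposing only the common set to guests is what makes migration between both hosts safe in either direction.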

Host network filtering of guest traffic

The libvirt network filter APIs allow definition of a flexible set of policies to control network traffic seen by guests. The filter configurations are automatically translated into a set of rules in ebtables, iptables and ip6tables. The rules are automatically created when the guest starts and torn down when it stops. The rules are able to automatically learn the guest IP address through ARP traffic, or DHCP responses from a (trusted) DHCP server. The data model is closely aligned with the DMTF industry spec for virtual machine network filtering.

Libvirt ships with a default set of filters which can be turned on to provide ‘clean’ traffic from the guest, i.e. they block ARP spoofing and IP spoofing by the guest, which would otherwise DoS other guests on the network.
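Turning on the default filters amounts to referencing a filter from the guest's network interface definition. The sketch below adds a reference to the stock ‘clean-traffic’ filter to an interface XML fragment, optionally fixing the guest IP instead of letting it be learned. The helper name, bridge name and address are assumptions for the example.

```python
import xml.etree.ElementTree as ET


def add_clean_traffic(interface_xml, ip=None):
    """Return interface XML with a reference to the clean-traffic filter."""
    iface = ET.fromstring(interface_xml)
    ref = ET.SubElement(iface, "filterref", {"filter": "clean-traffic"})
    if ip is not None:
        # Pin the permitted source address rather than auto-detecting it.
        ET.SubElement(ref, "parameter", {"name": "IP", "value": ip})
    return ET.tostring(iface, encoding="unicode")


plain = "<interface type='bridge'><source bridge='br0'/></interface>"
filtered = add_clean_traffic(plain, ip="10.0.0.5")
filter_ref = ET.fromstring(filtered).find("filterref")
```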

Secure migration data transport

KVM does not provide any secure migration capability. The libvirt KVM driver adds support for tunnelling migration data over a secure libvirtd managed data channel.

Management of encrypted virtual disks for guests

An API set for managing secret keys enables a secure model for deploying guests to untrusted shared storage. By separating management of the secret keys from the guest configuration, it is possible for the management app to ensure a guest can only be started by an authorized user on an authorized host, and prevent restarts once it shuts down. This leverages the Qcow2 data encryption capabilities and is the only method to securely deploy a guest if the storage admins / network are not trusted.

Seamless migration of SPICE clients

The libvirt migration protocol automatically invokes the KVM graphics client migration commands at the right point in the migration process to relocate connected clients.

Integration with arbitrary lock managers

The lock manager API allows strict guarantees that a virtual machine’s disks can only be in use by one process in the cluster. Pluggable implementations enable integration with different deployment scenarios. Available implementations can use Sanlock, POSIX locks (fcntl) and, in the future, DLM (clustersuite). Leases can be explicitly provided by the management application, or zero-conf based on the host disks assigned to the guests.
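The POSIX lock flavour of this guarantee can be shown in miniature: while one process holds an exclusive fcntl lock on a lease file, a second process that tries to take the same lock is refused. This is only a sketch of the locking primitive on a single Linux host, not of libvirt's lock manager plugins, and the lease file is a throwaway temporary file.

```python
import fcntl
import subprocess
import sys
import tempfile

# Script run in a second process: try to take the lock without blocking.
CHILD = """
import fcntl, sys
with open(sys.argv[1], "r+") as f:
    try:
        fcntl.lockf(f, fcntl.LOCK_EX | fcntl.LOCK_NB)
        print("acquired")
    except OSError:
        print("busy")
"""


def try_lock_from_other_process(path):
    """Report whether a separate process can lock `path` right now."""
    out = subprocess.run(
        [sys.executable, "-c", CHILD, path], stdout=subprocess.PIPE, check=True
    )
    return out.stdout.decode().strip()


with tempfile.NamedTemporaryFile() as lease:
    # This process plays the role of the running guest holding its lease.
    fcntl.lockf(lease, fcntl.LOCK_EX | fcntl.LOCK_NB)
    while_held = try_lock_from_other_process(lease.name)

    # Once the lease is released, another process may take it.
    fcntl.lockf(lease, fcntl.LOCK_UN)
    after_release = try_lock_from_other_process(lease.name)
```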

Host device passthrough

Integration between host device management APIs and KVM device assignment allows for safe passthrough of PCI devices from the host. libvirt automatically ensures that the device is detached from the host OS before guest startup, and re-attached after shutdown (or equivalent for hotplug/unplug). PCI topology validation ensures that the devices can be safely reset, with FLR, Power management reset, or secondary bus reset.
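From the application's side, requesting passthrough comes down to describing the host PCI device in the guest XML. The sketch below builds such a fragment from an address in the usual "domain:bus:slot.function" notation, with managed='yes' so that the detach and re-attach are left to libvirt. The helper name and the example address are hypothetical.

```python
import re
import xml.etree.ElementTree as ET

PCI_ADDRESS = re.compile(
    r"([0-9a-fA-F]{4}):([0-9a-fA-F]{2}):([0-9a-fA-F]{2})\.([0-7])"
)


def hostdev_xml(pci_address):
    """Build a managed PCI <hostdev> element for an address like 0000:06:02.0."""
    match = PCI_ADDRESS.fullmatch(pci_address)
    if match is None:
        raise ValueError("not a PCI address: %r" % pci_address)
    domain, bus, slot, function = match.groups()
    hostdev = ET.Element(
        "hostdev", {"mode": "subsystem", "type": "pci", "managed": "yes"}
    )
    source = ET.SubElement(hostdev, "source")
    ET.SubElement(source, "address", {
        "domain": "0x" + domain,
        "bus": "0x" + bus,
        "slot": "0x" + slot,
        "function": "0x" + function,
    })
    return ET.tostring(hostdev, encoding="unicode")


fragment = hostdev_xml("0000:06:02.0")
address = ET.fromstring(fragment).find("./source/address")
```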

Host device API enumeration

APIs are provided for querying what devices are present on the host. This enables applications wishing to perform device assignment to determine which PCI devices are available on the host and how they are used by the host OS, e.g. to detect the case where the user asked to assign a device that is currently used as the host eth0 interface.

NPIV SAN management

The host device APIs allow creation and deletion of NPIV SCSI interfaces. This in turn provides access to sets of LUNs, which can be associated with individual guests. This allows the virtual SCSI HBA to “follow” the guest wherever it may be running.

Core dump integration with “crash”

The libvirt core dump APIs allow a guest memory dump to be obtained and saved into a format which can then be analysed by the Linux crash tool.

Access to guest dmesg logs even for crashed guests

The libvirt API for peeking into guest memory regions allows the ‘virt-dmesg’ tool to extract recent kernel log messages from a guest. This is possible even when the guest kernel has crashed and thus no guest agent is otherwise available.

Cross-language accessibility of APIs

libvirt provides a stable API which allows KVM to be managed from C, Perl, Python, Java, C#, OCaml and PHP. Components of the management stack are not all tied to using the same language.

Zero-conf host network access

Out of the box libvirt provides a NAT based network which allows guests to have outbound network access on a host, whether running wired, wireless or VPN, without needing any administrator setup ahead of time. While QEMU provides the ‘SLIRP’ networking mode, this has very low performance and does not integrate with the network filter capabilities libvirt also provides, nor does it allow guests to communicate with each other.
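A NAT network of this kind is described by a small XML document. The sketch below generates one from a subnet, using the first host address as the gateway and the rest as the DHCP range. The helper name is hypothetical, and the name, bridge and subnet are passed in as example values rather than read from any real host.

```python
import ipaddress
import xml.etree.ElementTree as ET


def nat_network_xml(name, bridge, subnet):
    """Build a NAT network definition for the given IPv4 subnet."""
    net = ipaddress.ip_network(subnet)
    hosts = list(net.hosts())
    gateway, first, last = hosts[0], hosts[1], hosts[-1]

    network = ET.Element("network")
    ET.SubElement(network, "name").text = name
    ET.SubElement(network, "bridge", {"name": bridge})
    ET.SubElement(network, "forward", {"mode": "nat"})
    ip = ET.SubElement(
        network, "ip", {"address": str(gateway), "netmask": str(net.netmask)}
    )
    dhcp = ET.SubElement(ip, "dhcp")
    ET.SubElement(dhcp, "range", {"start": str(first), "end": str(last)})
    return ET.tostring(network, encoding="unicode")


default_xml = nat_network_xml("default", "virbr0", "192.168.122.0/24")
parsed = ET.fromstring(default_xml)
```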

Storage APIs for managing common host storage configurations

Few APIs exist for managing storage on Linux hosts. libvirt provides a general purpose storage API which allows use of NFS shares; formatting & use of local filesystems; partitioning of block devices; creation of LVM volume groups and creation of logical volumes within them; enumeration of LUNs on SCSI HBAs; login/out of iSCSI servers & access to their LUNs; automatic discovery of NFS exports, LVM volume groups and iSCSI targets; creation and cloning of non-raw disk formats, optionally including encryption; enumeration of multipath devices.

Integration with common monitoring tools

Plugins are available for munin, nagios and collectd to enable performance monitoring of all guests running on a host / in a network. Additional 3rd party monitoring tools can easily integrate by using a readonly libvirt connection to ensure they don’t interfere with the functional operation of the system.

Expose information about virtual machines via SNMP

The libvirt SNMP agent allows information about guests on a host to be exposed via SNMP. This allows industry standard tools to report on what guests are running on each host. Optionally SNMP can also be used to control guests and make changes.

Expose information & control of host via CIM

The libvirt CIM agent exposes information about, and allows control of, virtual machines, virtual networks and storage pools via the industry standard DMTF CIM schema for virtualization.

Expose information & control of host via AMQP/QMF

The libvirt AMQP agent (libvirt-qpid) exposes information about, and allows control of, virtual machines, virtual networks and storage pools via the QMF object modelling system, running on top of AMQP. This allows data center wide view & control of the state of virtualization hosts.